Executive summary (as of Sept 2025)

- Frontier models: OpenAI shipped GPT-5 (Aug 7), framing it as a two-tier system (a fast “smart” model plus a deeper reasoning model) with a router between them; early tests show both impressive capability and familiar safety frictions (rapid jailbreaks, harmful-content edge cases). Google continued rolling out Gemini 2.5 updates (including 2.5 Flash Image, “nano-banana”), and Anthropic released Claude 4 with “extended thinking.” (Sources: OpenAI; blog.google; Anthropic)

- Autonomous science: Stanford & Chan Zuckerberg Biohub published a Virtual Lab multi-agent system where an AI “PI” orchestrates specialist AI agents for end-to-end biological discovery. (Sources: Stanford Medicine; CZ Biohub)

- Compute & capital: NVIDIA’s Jensen Huang projects $3–4 trillion in global AI-infrastructure spend by 2030; the EU launched “InvestAI” to mobilize tens of billions for European AI. (Sources: Reuters; NCF International; OpenAI)

- Governance & safety: OpenAI published GPT-5’s system card and a safe-completions approach; the UK AI Security Institute (AISI; formerly the AI Safety Institute) and India’s AISI advanced evaluation agendas. (Sources: OpenAI; NHS England; GOV.UK; IndiaAI)

- Work & society: Developers’ AI adoption keeps climbing (84% use or plan to use AI; 51% of professionals use it daily), while trust and collaboration frictions persist; knowledge-work surveys show rising productivity and “human-agent team” patterns. Education ministries began codifying classroom AI guidance. (Sources: Stack Overflow; Microsoft; Education Hub)

1) Major model releases & technical announcements

1.1 OpenAI GPT-5 (released Aug 7)

What shipped

OpenAI introduced GPT-5 as a unified system: a fast “smart” model for most queries, a deeper reasoning model for hard problems, and a real-time router to pick between them. The launch emphasized multimodality and safety mitigations. (Source: OpenAI)
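The routing idea can be illustrated with a toy dispatcher. This is only a sketch of the general pattern, not OpenAI's router, whose internals are not described here. The functions `fast_model`, `reasoning_model`, and `estimate_difficulty`, the cue list, and the threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical stand-ins for the two tiers; a real system would call model APIs.
def fast_model(query: str) -> str:
    return f"[fast] {query}"

def reasoning_model(query: str) -> str:
    return f"[reasoning] {query}"

# Assumed heuristic: a few keyword cues plus query length as a crude difficulty proxy.
HARD_CUES = ("prove", "step by step", "debug", "derive")

def estimate_difficulty(query: str) -> float:
    """Return a score in [0, 1]; higher means 'probably needs deeper reasoning'."""
    q = query.lower()
    cue_score = sum(cue in q for cue in HARD_CUES) / len(HARD_CUES)
    length_score = min(len(q.split()) / 200, 1.0)
    return max(cue_score, length_score)

@dataclass
class Router:
    threshold: float = 0.2  # assumed cut-off, not a published value

    def route(self, query: str) -> str:
        # Send hard-looking queries to the slower tier, everything else to the fast tier.
        if estimate_difficulty(query) >= self.threshold:
            return reasoning_model(query)
        return fast_model(query)

if __name__ == "__main__":
    router = Router()
    print(router.route("What is the capital of France?"))
    print(router.route("Prove that the square root of 2 is irrational."))
```

A production router would presumably learn its decision from signals such as user feedback and measured correctness rather than keyword cues; the sketch only shows where such a decision sits in the request path.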

Safety posture & evaluations

OpenAI published a system card (Aug 13) detailing red-teaming, bio-risk safeguards, and safety controls; the launch post cites 5,000+ hours of testing with partners (including the UK AISI). Independent security write-ups (and early “jailbreak” demonstrations) highlight that harmful outputs remain possible under adversarial prompting, consistent with prior frontier releases. (Sources: OpenAI; Yahoo Finance; Gartner)

Early industry/academic reaction

Coverage ranged from policy-focused explainers of GPT-5’s content-safety limits to security analyses showing post-release jailbreaks and “prompt-based exploit chains,” reinforcing the tension between openness and risk reduction. (Sources: NHS England; Gartner)

How it compares right now

- Anthropic Claude 4 (May 22): “Extended thinking,” parallel tool use, and strong software-engineering scores (e.g., claimed leadership on SWE-bench Verified). Available via the Anthropic API, Amazon Bedrock, and Google Vertex AI in Opus 4 and Sonnet 4 tiers. (Source: Anthropic)

- Google Gemini 2.5: Natively multimodal with very long context (1M tokens now; larger on the roadmap). Summer updates brought Deep Think mode, Project Mariner computer use, and a major image-editing/generation upgrade (2.5 Flash Image, a.k.a. “nano-banana”), now available via the API and Vertex; consumer Gemini apps also received image-editing features. (Sources: blog.google; Google Developers Blog; Google Cloud; Android Central)

Bottom line: On paper, all three frontier stacks now expose sustained-reasoning modes and agent-centric tooling. Differences show in tooling ecosystems (OpenAI’s assistants/agents, Anthropic’s Claude Code & MCP, Google’s AI Studio/Vertex), in pricing and guardrail strategies, and in how quickly red-teaming findings translate into mitigations. (Sources: Anthropic; Google Developers Blog)

1.2 Autonomous multi-agent science (Stanford × CZ Biohub)

A Nature paper and institutional releases describe a Virtual Lab in which a human creates a “PI agent” that recruits and manages specialist AI agents (e.g., hypothesis, methods, and critic roles). The system executed realistic scientific cycles and reported candidate therapeutic designs, demonstrating how agentic orchestration can compress discovery loops. (Sources: Dark Reading; Stanford Medicine; CZ Biohub)

2) Development trends & application directions

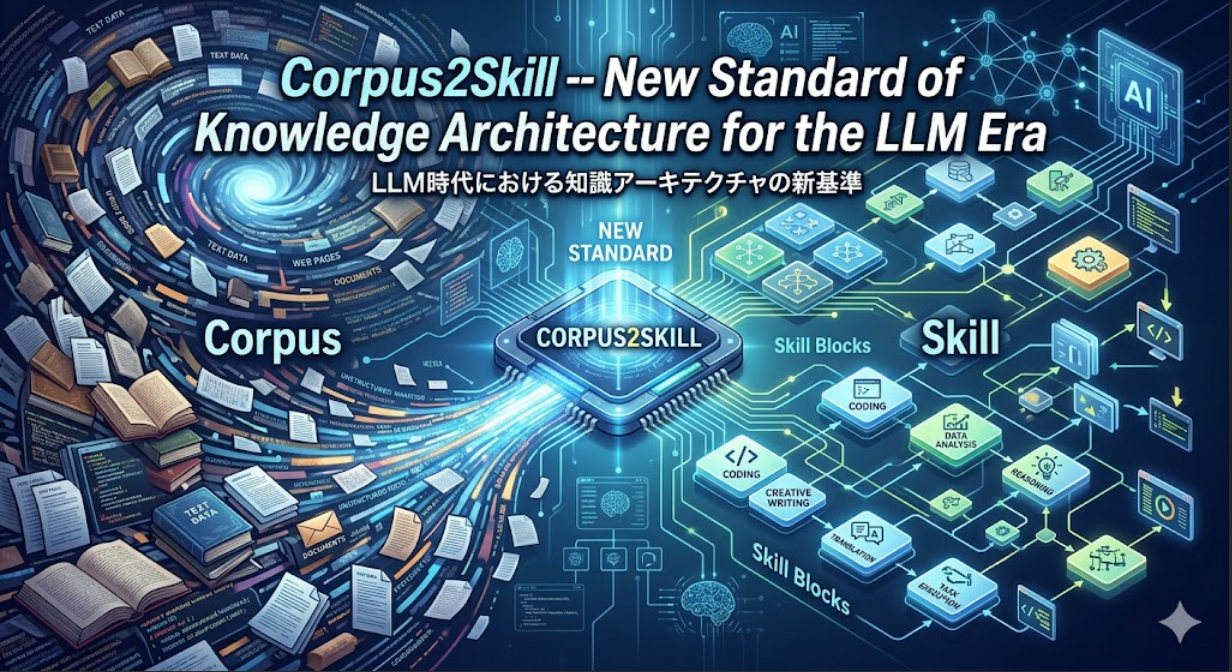

2.1 Skills pipelines & prompt/AI training

The UK announced TechFirst (≈£187 m) to expand AI/digital skills across schools and the workforce (with 7.5 million workers targeted in partner programs). Parallel efforts span regional training (e.g., free AI upskilling in the West Midlands) and private-sector offerings (e.g., free AI training from SAS). The Department for Education also published guidance on safe and effective classroom AI use. (Sources: Learning News; Tech Xplore; Government Transformation; SAS; Education Hub)

Note: The branding “AI Skills Academy” is used by various providers; the policy thrust is a multi-track national skills push (government + industry), not a single academy. (Source: Workplace Insight)

2.2 Edge-AI: TinyML & federated learning

- TinyML communities report continued growth (summit tracks on low-power vision/MLP-mixers); market analysts project Edge-AI TAM climbing toward the $40–45 B range by 2030. (Sources: GOV.UK; The Independent)

- Federated learning moves from pilot to production in sectors handling sensitive data; 2025 reviews survey advances in personalization, privacy, and cross-silo deployments. (Source: GOV.UK)
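To make the federated-learning idea concrete, here is a minimal sketch of federated averaging (FedAvg), one common aggregation rule in which a server combines client model weights in proportion to each client's local sample count. The source does not say which aggregation methods the surveyed deployments use, so treat this as an illustrative assumption; the function name `federated_average` and the toy data are hypothetical.

```python
from typing import List, Tuple

# Each client sends (weight_vector, number_of_local_samples); raw data never leaves the client.
ClientUpdate = Tuple[List[float], int]

def federated_average(client_updates: List[ClientUpdate]) -> List[float]:
    """Sample-count-weighted average of client weight vectors (FedAvg-style aggregation)."""
    if not client_updates:
        raise ValueError("need at least one client update")
    total_samples = sum(n for _, n in client_updates)
    if total_samples <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must send vectors of the same length")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_samples
    return averaged

if __name__ == "__main__":
    # Two hypothetical hospitals: the second holds three times as much data.
    updates = [([1.0, 2.0], 1), ([3.0, 4.0], 3)]
    print(federated_average(updates))
```

In a cross-silo deployment this aggregation step would typically be paired with secure aggregation or differential privacy; those pieces are omitted here.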

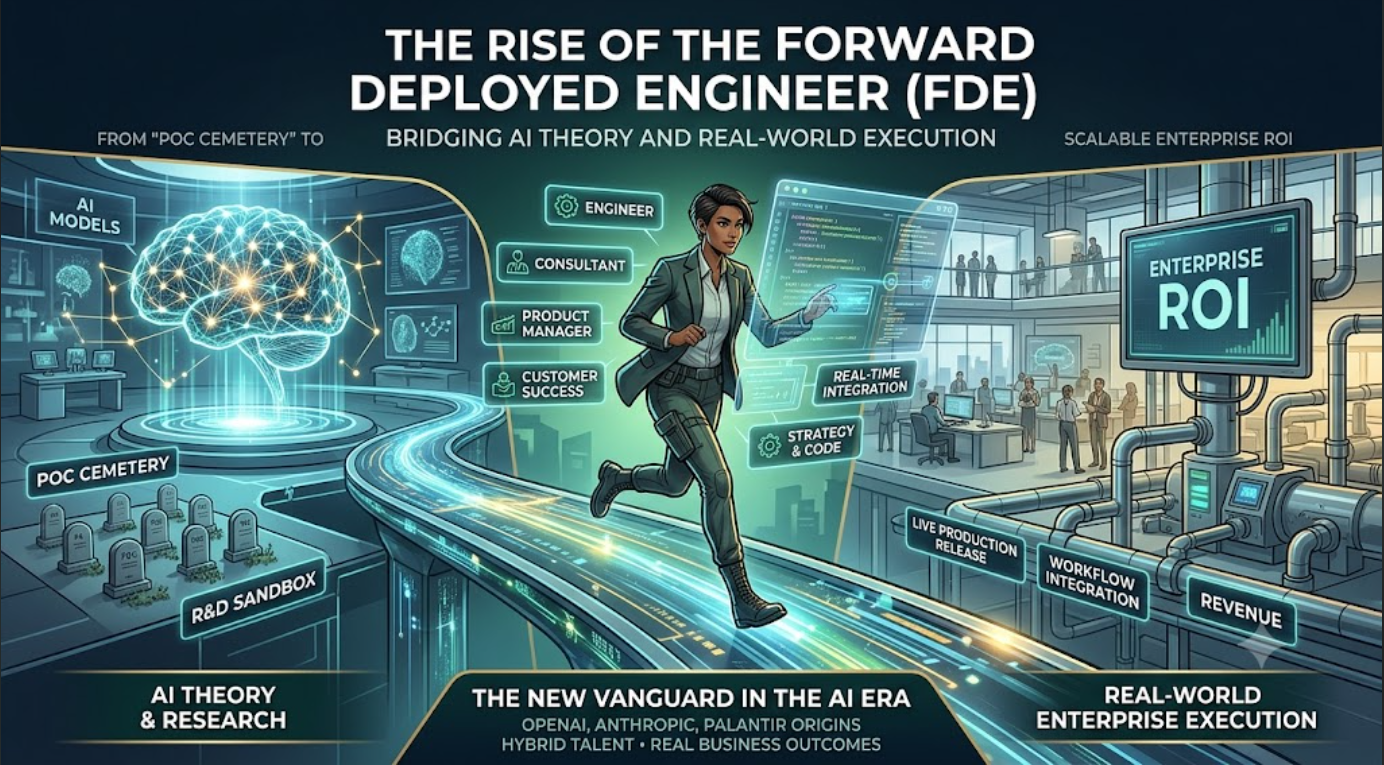

2.3 “Vibe coding,” agents & practical utility

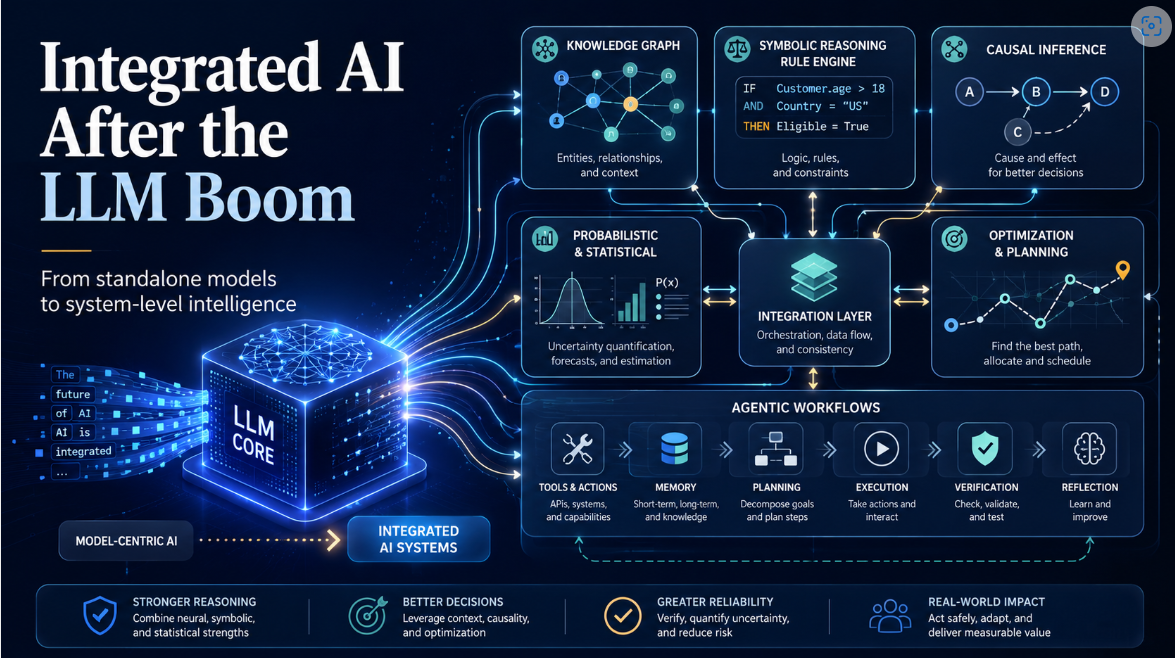

“Vibe coding” (prompt-first, exploratory app building) moved mainstream: Google’s dev blog explicitly says demo apps were “vibe coded in AI Studio.” Meanwhile, introductions to agentic AI (multi-tool, goal-pursuit systems) and industry pieces argue agents are shifting from assistants to teammates in longer workflows. (Sources: Google Developers Blog; Wikipedia; Simon Willison’s Weblog)

3) Industry investment & infrastructure expansion

- NVIDIA outlook: Post-earnings, CEO Jensen Huang reiterated a $3–4 trillion global AI-infrastructure build-out by 2030 (and “AI boom far from over”), despite near-term volatility and China uncertainty. Prior keynotes pitched “AI factories” and sovereign-AI buildouts as multi-trillion industries. (Sources: Reuters; NVIDIA Blog)

- Europe’s “InvestAI”: The European Commission launched InvestAI to “mobilise massive digital investments,” providing guarantees and equity to de-risk AI projects and to crowd in private capital; it is positioned as a tent-pole of the EU’s AI competitiveness strategy. (Source: OpenAI)

- Public–private partnerships: The UK announced a £1 billion AI compute expansion, a Sovereign AI Industry Forum, and new NVIDIA-anchored centers; multiple EU/UK initiatives aim to scale public compute, train civil servants, and facilitate secure access for startups. (Source: Financial Times)

4) Regulation, ethics & safety

- GPT-5 vulnerabilities & mitigations: OpenAI’s safe-completions approach and system card detail guardrails (e.g., disallowed content classes, bio-risk testing); nonetheless, early jailbreak reports (security researchers, trade press) surfaced within days, underscoring ongoing red-team/patch cycles typical of frontier deployments. (Sources: NHS England; OpenAI; Yahoo Finance; Gartner)

- Institutions & governance:

  - UK AISI continued publishing evaluation tooling and synthesized global risk literature via the International AI Safety Report (Jan). (Sources: TechCrunch; GOV.UK)

  - India’s AI Safety Institute (under the IndiaAI Mission) advanced through consultations and partner calls (MeitY; Jan–May updates). (Sources: IndiaAI; indiaai.s3.ap-south-1.amazonaws.com)

  - EU AI Act moved into the implementation/enforcement phase, with analyses noting regulatory timelines and sector-specific obligations. (Source: Reuters)

5) Impact on work & society

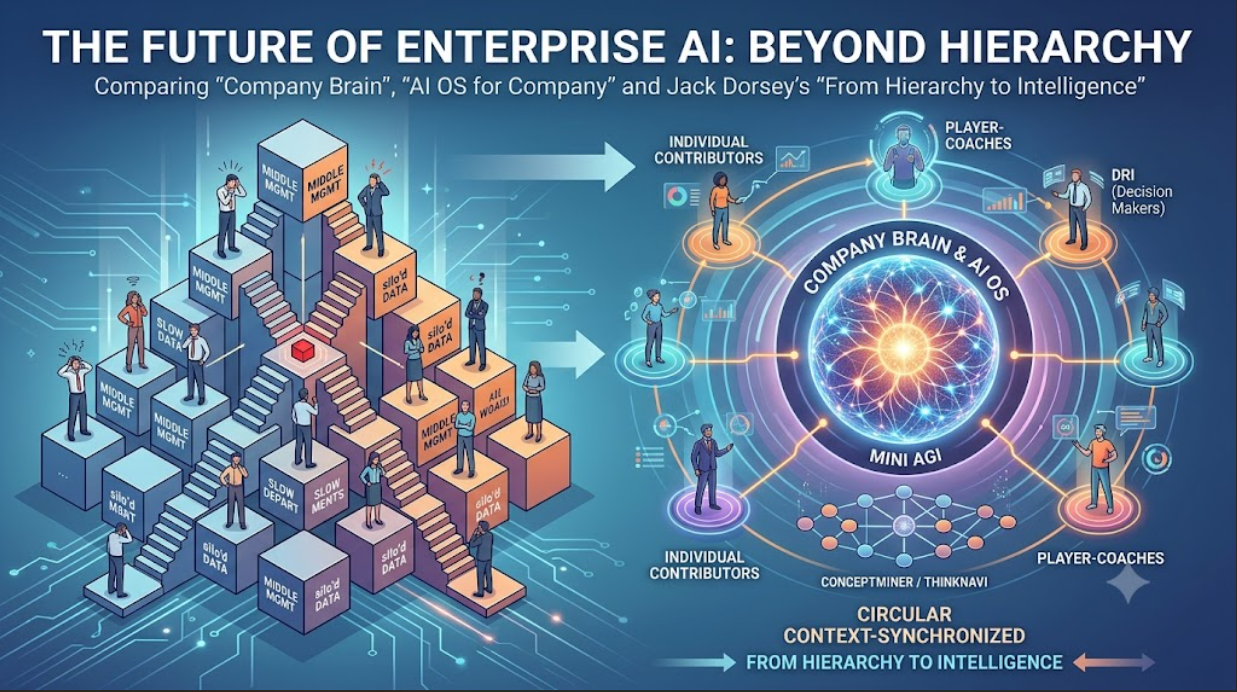

Developer & knowledge-worker usage

- Stack Overflow 2025: 84% of respondents use or plan to use AI; 51% of professional developers use AI daily. Adoption is high, but trust remains mixed; learning patterns and new AI-specific skills ramped up year-over-year. (Sources: Stack Overflow; Stack Overflow Blog)

- Microsoft Work Trend Index (WTI): Surveys of 31,000 workers across 31 countries, telemetry signals, and interviews describe the “Frontier Firm”: organizations re-architecting workflows around human-agent teams. (Source: Microsoft)

- Enterprise productivity: Prior GitHub/Accenture field studies (still widely cited) measured up to 55% faster coding with AI pair-programming; newer 2025 commentaries highlight code-quality trade-offs and the importance of review culture. (Sources: The GitHub Blog; gitclear.com)

Education & healthcare

- Education (UK): Department for Education guidance clarifies permitted classroom uses; Parliamentary debates and professional bodies released complementary practice notes. (Sources: Education Hub; Hansard; My College)

- Healthcare: U.S. HHS progressed work on responsible-AI standards and governance in health contexts (coverage summarizing current initiatives), while national health systems trial ambient scribing and clinical-documentation agents under safety constraints. (Source: Reuters)

6) Meta-analysis & near-term strategic outlook

Optimism vs. skepticism

- Optimism: Frontier vendors highlight reasoning gains, agent-ready toolchains, and sustained demand; NVIDIA forecasts a $3–4T infrastructure build-out by 2030. (Source: Reuters)

- Skepticism: Scholars and professionals caution against over-interpreting capability leaps and labor-impact claims; reporting this month captures fields (e.g., historians) articulating limits of current models for nuanced reasoning. (Source: The Washington Post)

Themes to watch (next 6–18 months)

- Model miniaturization & edge: TinyML + on-device NPU growth; federated/privacy-preserving training unlocks regulated domains. (Sources: The Independent; GOV.UK)

- Energy & efficiency: Hardware roadmaps (e.g., NVIDIA Rubin/Blackwell) and scheduling/compilation stacks target lower total cost of ownership (TCO) per token; expect sustainability metrics to appear more prominently in model cards. (Source: PC Gamer)

- Robust alignment & evals: Expect expanded pre-deployment testing by institutes (UK/US/India) and more detailed vendor system cards, with continuous red-team → patch cycles. (Sources: GOV.UK; IndiaAI; OpenAI)

- Regulation: EU AI Act implementation, sector rules (health, finance, education), and watermarking/SynthID-style provenance (Google is expanding its use in consumer apps). (Sources: Reuters; Android Central)

- Human-AI collaboration: “Frontier Firm” patterns, with AI embedded into processes rather than isolated tasks; agentic workflows mainstreamed in IDEs, office suites, and vertical tools. (Sources: Microsoft; Anthropic)

Detailed references (selected)

Frontier models & capabilities

OpenAI GPT-5 launch & system card; safety approach. (Sources: OpenAI; NHS England)

Anthropic Claude 4 announcement (benchmarks, extended thinking, coding). (Source: Anthropic)

Google Gemini 2.5 updates: model card, blog (Deep Think, Mariner), developer release of 2.5 Flash Image; consumer release notes and press. (Sources: Google Cloud Storage; blog.google; Google Developers Blog; Gemini; Android Central)

Autonomous science, multi-agent

Nature paper & Stanford/CZ Biohub press on Virtual Lab; GEN News summary. (Sources: Dark Reading; Stanford Medicine; CZ Biohub; GEN)

Skills & training

UK TechFirst/workforce training, DfE classroom guidance, regional skills offers. (Sources: Learning News; Tech Xplore; Education Hub; Government Transformation)

Edge AI

IoT Analytics Edge-AI market outlook; federated-learning surveys. (Sources: The Independent; GOV.UK)

Industry investment

NVIDIA earnings/press commentary on $3–4T by 2030; EU InvestAI framework; UK sovereign-AI initiatives. (Sources: Reuters; OpenAI; Financial Times)

Safety & regulation

UK International AI Safety Report; UK AISI tooling; India AISI announcements. (Sources: GOV.UK; TechCrunch; IndiaAI)

EU AI Act implementation coverage. (Source: Reuters)

Work & society

Stack Overflow 2025; Microsoft WTI; GitHub/Accenture study; commentary on risks/quality trade-offs. (Sources: Stack Overflow; Microsoft; The GitHub Blog; gitclear.com)

Strategic implications (for researchers & industry)

- Product: Design for agentic workflows end-to-end (planning → tool use → review → logging), not isolated prompts. Bake in evaluation harnesses that mirror AISI-style tests; assume continuous hardening after launch. (Sources: Anthropic; GOV.UK)

- Platform: Balance frontier APIs with on-device/edge paths for latency/privacy; plan for federated/peered learning where data can’t move. (Source: GOV.UK)

- People: Pair adoption with skills programs and process redesign (the “Frontier Firm” playbook), not just tool rollouts. Track trust and code-quality KPIs alongside speed. (Sources: Microsoft; Stack Overflow; gitclear.com)

- Policy: Expect enforceable sector rules (EU AI Act + national regulators), provenance requirements, and institute-led pre-deployment evals to shape product gates. (Sources: Reuters; GOV.UK)

- Capital: Align roadmaps with a multi-trillion infrastructure build-out and emerging public funds (InvestAI) to co-finance compute, data pipelines, and safety tooling. (Sources: Reuters; OpenAI)
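The “planning → tool use → review → logging” loop named in the Product item can be sketched as a small pipeline. This is a toy under stated assumptions, not any vendor's agent framework: the tool registry `TOOLS`, the keyword-matching planner `plan`, and the trivial `review` check are hypothetical placeholders for model-driven components.

```python
import logging
from typing import Callable, Dict, List

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")

# Hypothetical tool registry; real agents would wrap search, code execution, APIs, etc.
TOOLS: Dict[str, Callable[[str], str]] = {
    "upper": lambda s: s.upper(),
    "reverse": lambda s: s[::-1],
}

def plan(goal: str) -> List[str]:
    """Toy planner: pick every registered tool whose name appears in the goal text."""
    return [name for name in TOOLS if name in goal]

def review(output: str) -> bool:
    """Toy reviewer: accept any non-empty result; a real gate would run evals or policy checks."""
    return bool(output.strip())

def run(goal: str, payload: str) -> str:
    """Execute the plan step by step, reviewing and logging each tool call."""
    result = payload
    for step in plan(goal):
        candidate = TOOLS[step](result)
        if not review(candidate):
            log.warning("step %s rejected by review; keeping previous result", step)
            continue
        log.info("step %s accepted: %r -> %r", step, result, candidate)
        result = candidate
    return result

if __name__ == "__main__":
    print(run("upper then reverse", "abc"))
```

Keeping review and logging inside the loop, rather than after it, is what lets an evaluation harness replay and audit individual steps later.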

Notes & caveats

- Some August items (e.g., NVIDIA guidance, Gemini updates, GPT-5 safety write-ups) are evolving; expect revisions as system cards, model cards, and institute evaluations update. Where multiple outlets reported similar facts, priority was given to primary sources (vendor blogs, model/system cards, official government or institutional releases) and major wire services (Reuters).