Industrial Reorganization Triggered by Nemotron 3 and the Recurrence of Internet History

1. Nemotron 3 as a Turning Point

NVIDIA’s announcement of Nemotron 3 carries significance beyond the release of yet another high-performance language model. More fundamentally, it signals that large language models of near state-of-the-art capability are becoming technically feasible outside the cloud. Until recently, the trajectory of generative AI assumed massive data centers, centralized compute, and API-based delivery. Users accessed “intelligence” remotely, under conditions defined by pricing models, usage limits, and evolving contractual terms.

Nemotron 3 challenges this assumption. It does not mean that every user will immediately run the full model on an ordinary PC. Rather, when combined with techniques such as distillation, quantization, and mixture-of-experts architectures, it makes it plausible that a level of cognitive performance comparable to today’s frontier models can be experienced locally within a few years. The importance of this shift lies not in raw benchmark scores, but in its implications for the structure of the AI industry.
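To make the role of quantization concrete, the following is a rough back-of-the-envelope sketch of how much memory a model's weights occupy at different precisions. The 30-billion-parameter figure is a hypothetical example, not a specification of Nemotron 3, and the estimate deliberately ignores activations, the KV cache, and runtime overhead.

```python
def weight_memory_gib(total_params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory needed to hold the model weights alone, in GiB."""
    total_bytes = total_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# Hypothetical 30B-parameter model (an assumption for illustration only).
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gib(30, bits):.1f} GiB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the footprint to a quarter, which is the kind of reduction that brings a large model within reach of a well-equipped PC. Mixture-of-experts designs mainly reduce the compute per token rather than the stored weight size, so they are not captured by this estimate.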

2. When and How Local LLMs Will Spread

As of late 2025, local LLM usage has already begun, though primarily among developers, researchers, and highly technical users. At this stage, hardware requirements, deployment complexity, and operational expertise still present significant barriers. However, the direction of technological progress is clear. By 2026, high-performance local LLMs are likely to become practical on high-end PCs and Apple Silicon-class devices. By 2027 or 2028, they are expected to function as everyday cognitive tools even on laptop-class hardware.

The decisive factor is not whether the absolute frontier model itself migrates to local hardware. For most users, what matters is whether everyday intellectual tasks (writing, planning, analysis, and decision support) can be carried out without dependence on centralized cloud inference. That threshold is rapidly approaching.

3. Industrial Reorganization Driven by Localization

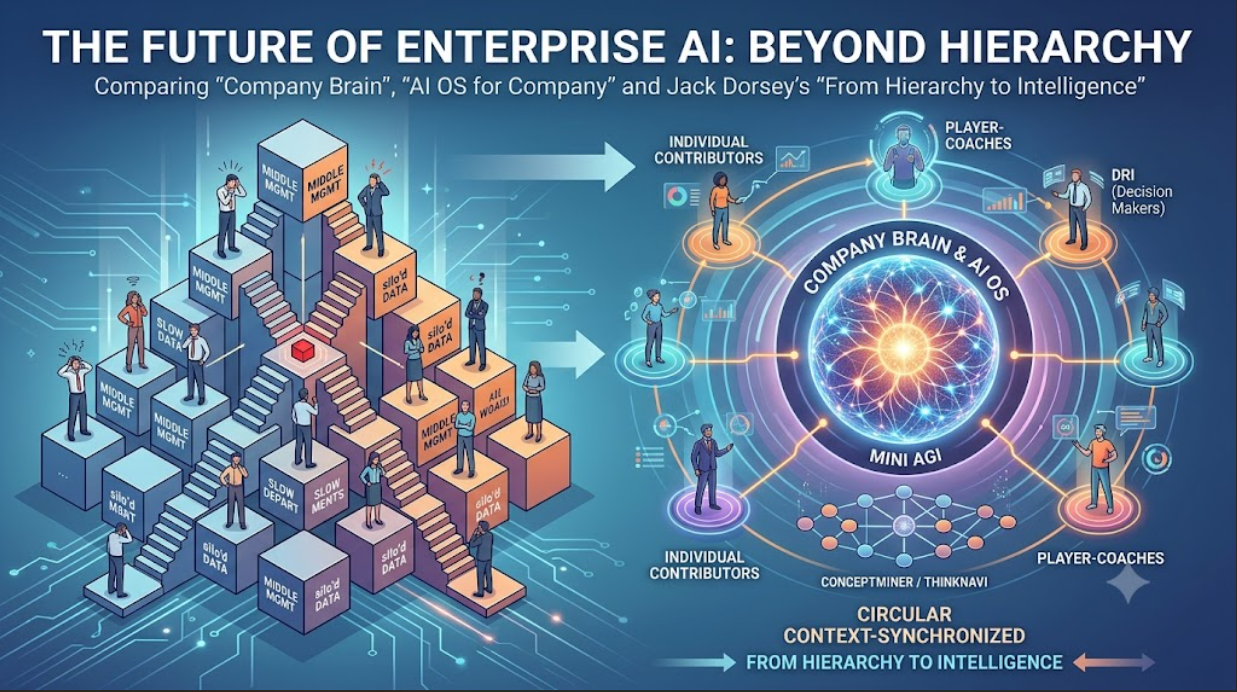

Once local inference becomes commonplace, the AI industry faces a gradual but irreversible reorganization. The most immediate consequence is the collapse of the implicit assumption that AI must be delivered as a cloud-based SaaS. Many existing AI services are built on the premise that intelligence is executed remotely and monetized via API calls or subscriptions. As inference moves to the edge, generic AI chat services, summarization tools, and text-generation utilities will increasingly be absorbed into operating systems and devices.

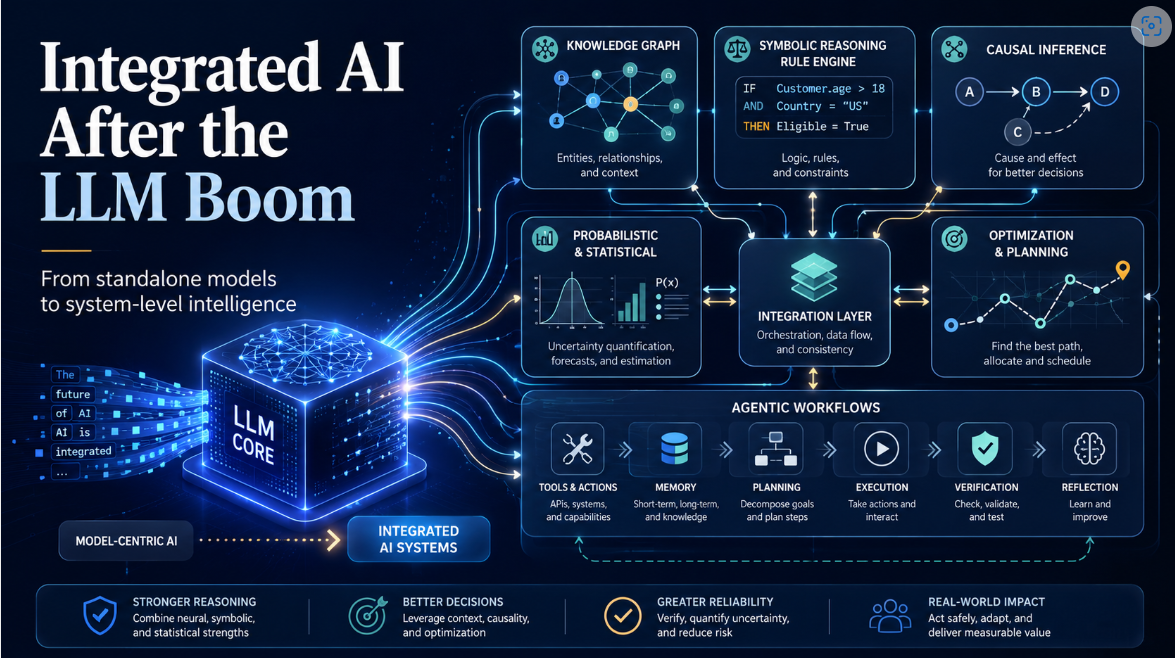

This does not imply the end of SaaS itself. Rather, it marks the end of SaaS models whose sole value proposition is the execution of AI inference. Cloud infrastructure will remain indispensable where local execution is structurally insufficient. Domains such as cross-organizational knowledge sharing, long-term memory, causal modeling, auditability, and accountability will continue to require centralized or hybrid architectures. The emerging division of labor can be summarized as local inference combined with cloud-based structure and governance.
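The division of labor described above can be sketched as a simple routing rule. This is a minimal illustration, not a reference architecture: the `Task` type, its flags, and the `route` function are hypothetical names introduced here, and the flags simply mirror the domains the paragraph lists as structurally hard to serve locally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """A unit of work, tagged with the needs that local execution cannot meet alone."""
    description: str
    needs_shared_knowledge: bool = False   # cross-organizational knowledge sharing
    needs_long_term_memory: bool = False   # state that must outlive one device
    needs_audit_trail: bool = False        # auditability and accountability


def route(task: Task) -> str:
    """Return 'hybrid' when cloud-side structure or governance is required, else 'local'."""
    if (task.needs_shared_knowledge
            or task.needs_long_term_memory
            or task.needs_audit_trail):
        return "hybrid"
    return "local"


print(route(Task("Summarize my meeting notes")))
print(route(Task("Approve a supplier contract", needs_audit_trail=True)))
```

In this sketch, inference itself always stays on the device; the "hybrid" label only indicates that the surrounding structure (shared knowledge, memory, audit records) lives in a centralized service.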

4. The Shift Toward Enterprise and Government Markets

Given the capital intensity and regulatory complexity of frontier AI development, it is economically rational for major AI vendors to pivot away from mass consumer services toward enterprise and government markets. This shift raises a familiar concern: will AI become monopolized by large incumbents, leaving no room for startups?

This question echoes debates from an earlier technological epoch.

5. Lessons from Internet History

During the early days of the internet, it was widely believed that its horizontally distributed architecture would create equal opportunities for individuals and small firms. In a technical sense, this expectation was fulfilled: anyone can still publish content and access information. Yet the business reality evolved differently. Core functions—search, advertising, commerce, and visibility—became concentrated in the hands of a few dominant platforms.

The internet thus demonstrated a structural pattern: democratic access can coexist with highly concentrated value capture. Technical decentralization does not guarantee economic decentralization.

6. AI as a Historical Analogy—and a Critical Difference

AI is likely to follow a similar pattern. Superficially, access to AI tools will remain widespread. Structurally, however, power will accrue to those who control the entry points and frameworks through which cognition is mediated. The crucial difference lies in the nature of mediation itself. Search engines assisted human judgment; AI systems increasingly participate in judgment.

Once AI enters the domain of decision-making, questions of responsibility become unavoidable. In fields such as medicine, law, public administration, and corporate governance, it is neither socially nor institutionally feasible for a single AI provider to supply universally valid judgments. This constraint fundamentally limits the possibility of complete centralization and distinguishes AI from earlier internet platforms.

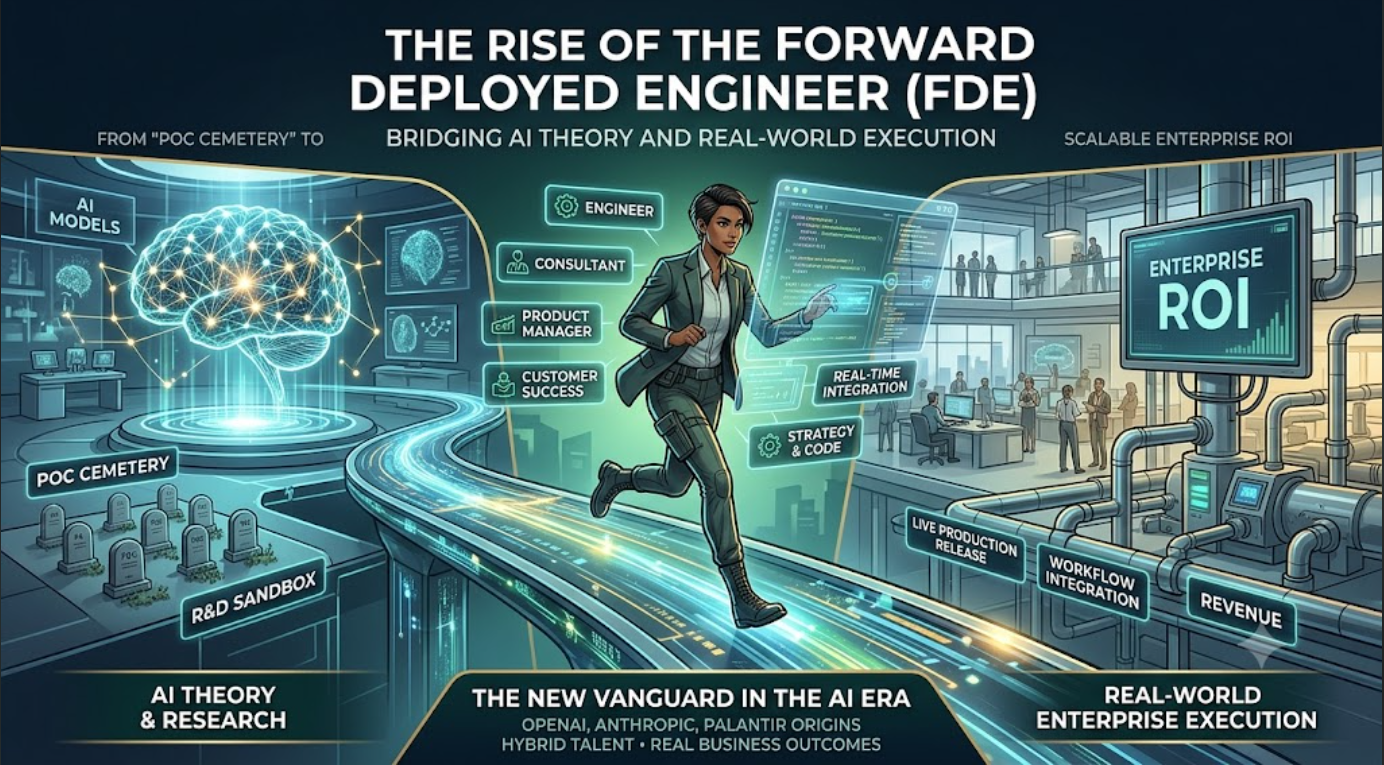

7. Where Startups Can Still Compete

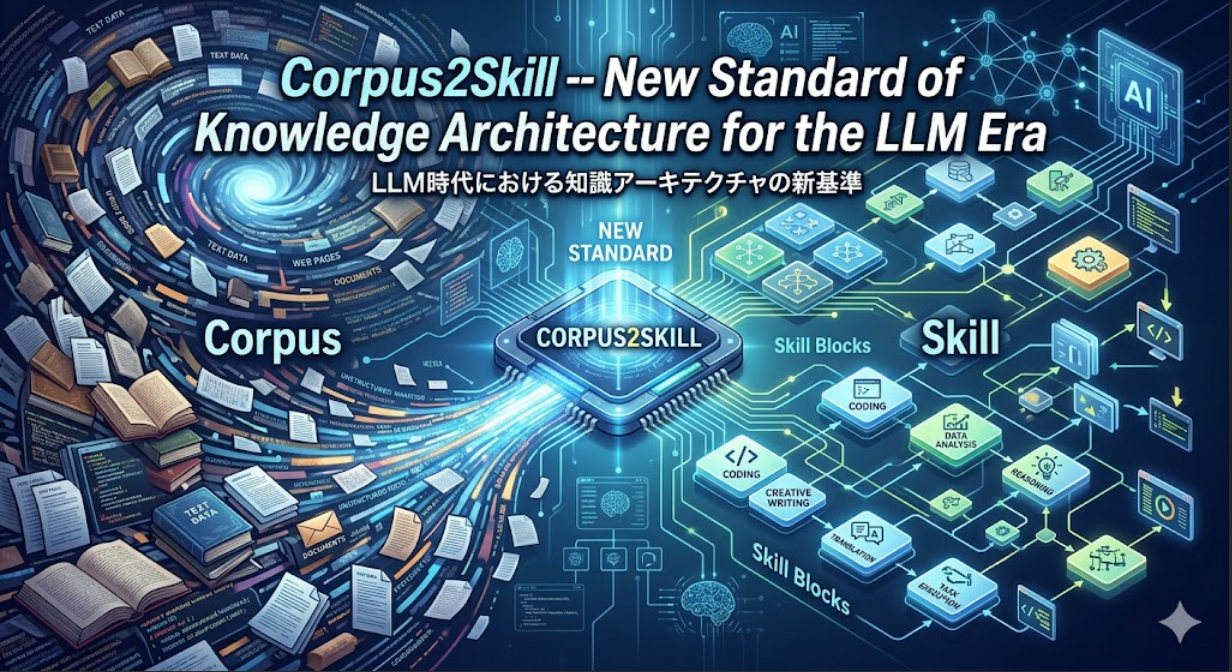

Within this reorganized landscape, the viable domain for startups becomes clearer. Competing on raw model scale or compute resources is unrealistic. Instead, opportunity lies in structuring human knowledge, reasoning, and organizational memory in ways that AI systems can leverage without monopolizing judgment itself. As local LLMs grow more capable, the relative value of structured knowledge, conceptual spaces, causal relationships, and decision histories increases.

Competition thus shifts from model performance to cognitive architecture. This represents a departure from the internet era, in which content creation was subordinate to platform dominance. In the AI era, the decisive asset may be the ability to design and maintain structures of thought that persist independently of any single model.
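One way to picture a structure that persists independently of any single model is a decision record stored in a plain, model-agnostic format. The sketch below is purely illustrative: `DecisionRecord`, its fields, and `to_portable_json` are hypothetical names, and the example values are invented.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import List, Optional


@dataclass
class DecisionRecord:
    """A decision and its reasoning, kept separate from whichever model assisted."""
    question: str
    decision: str
    decided_by: str                                    # the accountable person or body
    causes: List[str] = field(default_factory=list)    # factors believed to drive the outcome
    model_used: Optional[str] = None                   # provenance only; the record stays valid without it


def to_portable_json(record: DecisionRecord) -> str:
    """Serialize to plain JSON so any future model or tool can read the record."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    question="Should the team adopt local inference for drafting?",
    decision="Yes, for internal documents only",
    decided_by="Operations lead",
    causes=["data stays on device", "no per-call cost"],
)
print(to_portable_json(record))
```

The point of the design is that responsibility (`decided_by`) and reasoning (`causes`) are first-class fields, while the model is optional metadata that can change without invalidating the history.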

8. Conclusion

What Nemotron 3 ultimately signals is not merely faster local inference, but the early stages of AI’s transformation from a centralized intelligence service into a distributed cognitive infrastructure. As with the internet, democratization and concentration will coexist. Yet unlike the internet, AI operates at the level of reasoning and judgment, where responsibility and contextual specificity impose natural limits on centralization.

The defining question of the AI era is therefore not who possesses the most powerful model, but who designs the structures within which intelligence—human and artificial—operates. The answer to that question will shape both the future of the AI industry and the distribution of power within it.