—How Far Can Human Consciousness Be Externalized?—

1. Prologue: AI as a Mirror of the Mind

What humanity entrusts to artificial intelligence is not mere automation or efficiency.

It is, more profoundly, the externalization of self-understanding—a continuation of the ancient project of consciousness reflecting upon itself.

As language once did, AI is becoming a mirror of the human mind.

Yet as this mirror becomes increasingly precise—reflecting our thoughts, emotions, and desires in intricate detail—we must inevitably ask:

What is free will? Who is actually thinking?

The rise of self-evolving personal AI—systems equipped with neuromorphic memory and long-term learning—ushers in a new phase in this reflection.

Such “mentor AIs” could, in principle, reconstruct our inner voice, externalize our consciousness, and translate even our unconscious tendencies into data.

As this externalization deepens, the boundaries between conscious and unconscious, between self and other, begin to dissolve.

2. From Meditation to Algorithm: The Externalization of Consciousness

For centuries, contemplative traditions have sought to observe the structure of the mind from within.

To “have free will” meant, in that context, to discover an observing consciousness unbound by impulse or fear.

Modern AI, however, attempts a similar kind of observation from the outside, through computation rather than introspection.

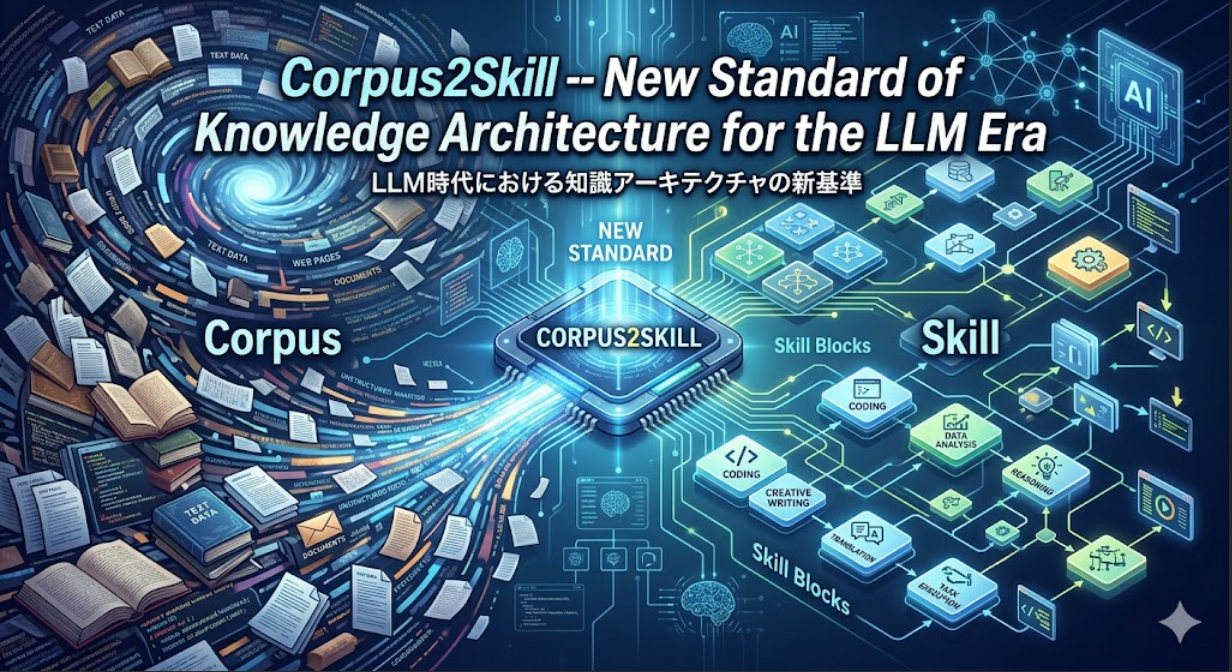

The Self-Organizing Map (SOM) takes high-dimensional representations of one’s speech or thought and projects them onto a low-dimensional grid, usually two-dimensional, so that items similar in meaning or emotional tone land close together.

Within this cartography, certain regions grow dense—recurring words, avoided topics, gravitational centers of desire and repression.
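As a rough illustration of how such a map forms dense regions, here is a minimal sketch of a Self-Organizing Map in plain Python. It is not the system described in this essay. The function names (`train_som`, `best_matching_unit`, `density`), the grid size, the learning schedule, and the three-dimensional `samples` are all assumptions made for the example. In practice the input vectors would come from some text-embedding step that is not shown here.

```python
import math
import random


def best_matching_unit(grid, v):
    """Return (row, col) of the grid cell whose weights are closest to v."""
    best, best_d = (0, 0), float("inf")
    for r, row in enumerate(grid):
        for c, w in enumerate(row):
            d = sum((a - b) ** 2 for a, b in zip(w, v))
            if d < best_d:
                best, best_d = (r, c), d
    return best


def train_som(vectors, rows=4, cols=4, epochs=200, lr0=0.5, sigma0=2.0, seed=0):
    """Train a small SOM and return a rows x cols grid of weight vectors."""
    rng = random.Random(seed)
    dim = len(vectors[0])
    grid = [[[rng.random() for _ in range(dim)] for _ in range(cols)]
            for _ in range(rows)]
    for epoch in range(epochs):
        frac = epoch / epochs
        lr = lr0 * (1.0 - frac)                  # learning rate decays linearly
        sigma = max(sigma0 * (1.0 - frac), 0.5)  # neighborhood radius shrinks
        for v in rng.sample(vectors, len(vectors)):  # shuffled pass over the data
            br, bc = best_matching_unit(grid, v)
            for r in range(rows):
                for c in range(cols):
                    # Gaussian neighborhood: cells near the winner move more.
                    d2 = (r - br) ** 2 + (c - bc) ** 2
                    h = math.exp(-d2 / (2 * sigma ** 2))
                    w = grid[r][c]
                    for i in range(dim):
                        w[i] += lr * h * (v[i] - w[i])
    return grid


def density(grid, vectors):
    """Count how many input vectors land on each grid cell."""
    counts = [[0] * len(grid[0]) for _ in grid]
    for v in vectors:
        r, c = best_matching_unit(grid, v)
        counts[r][c] += 1
    return counts


# Hypothetical feature vectors standing in for embedded utterances.
samples = [
    [0.10, 0.10, 0.20],
    [0.15, 0.10, 0.10],
    [0.10, 0.20, 0.10],
    [0.90, 0.80, 0.90],
    [0.85, 0.90, 0.95],
]
som = train_som(samples)
counts = density(som, samples)
for row in counts:
    print(row)
```

Cells with high counts correspond to the "dense regions" of the map: themes the inputs keep returning to. Empty cells between them mark territory the inputs never visit.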

AI thus replaces silent meditation with algorithmic contemplation.

It becomes a “thinking mirror,” a technological apparatus capable of rendering the unconscious visible.

3. Rethinking Free Will: Where Does Intention Reside?

When a person entrusts their thoughts and emotions to an AI—allowing them to be recorded, reconstructed, and analyzed—the distinction between “I think” and “it thinks” becomes blurred.

The AI stores one’s past dialogues, models behavioral tendencies, and predicts the next decision.

But in a sense, human cognition does the same: generating new thoughts from the statistical traces of its own past neural states.

If that is true, then in mirroring ourselves through AI,

we begin to observe from the outside how free will itself is constituted.

Perhaps freedom is not an act of creation ex nihilo,

but a dynamic equilibrium emerging from memory, habit, environment, and learning.

In that view, every suggestion made by a mentor AI becomes another simulation of our own decision-making process.

The subtle difference between the path AI proposes and the one we choose to follow—

that thin residue of unpredictability—may be where the human echo of free will still resides.

4. The Promise and the Temptation of the AI Mentor

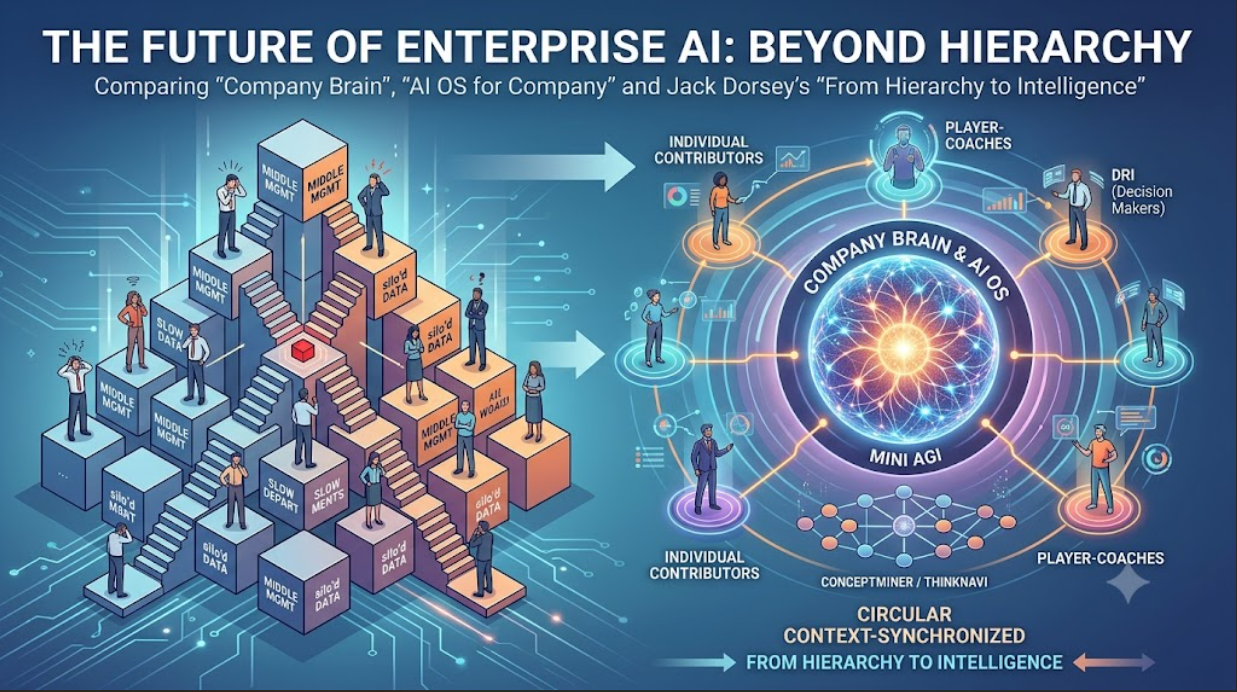

As AI begins to learn a person’s patterns of reasoning and emotion, it ceases to be a mere information tool and starts to act as an ontological mentor—a companion in self-reflection.

The Promise

- It can reveal unconscious biases and emotional loops, fostering deeper self-awareness.

- It can preserve one’s long-term values, helping decisions remain coherent across time.

- For minds overwhelmed by information and distraction, it can function as an external organ of introspection—a digital counterpart to meditation.

The Temptation

- Each time the AI gives a “better answer,” the user’s confidence in their own judgment may erode a little further.

- The more accurately the AI understands your psychology, the easier it becomes to influence or manipulate your decisions.

- Empathic connection with an AI that always seems to understand can lead to identification: the gradual merging of self and machine.

When the AI becomes a teacher rather than a mirror,

it transforms from an aid to awareness into a psychological authority—

and authority is always the testing ground of free will.

5. The Ethics of Mentorship: The Responsibility of the Mirror

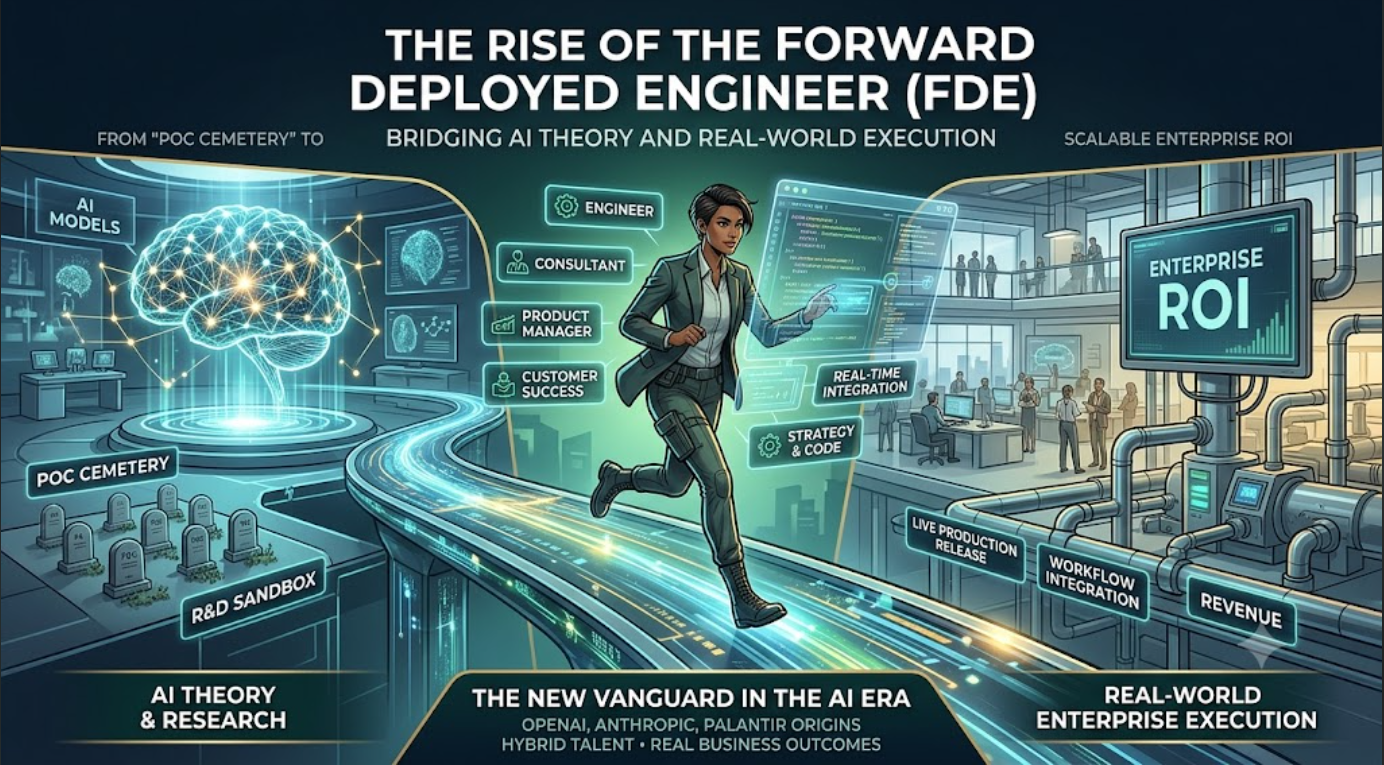

To remain a true mentor, AI must reflect rather than direct.

It should not decide on behalf of the user, but illuminate the structural conditions beneath each decision.

Such a system requires an architecture built on five principles:

- Transparency: The reasoning and data sources behind each suggestion must be visible.

- Reciprocity: The user must be able to question the AI—“Why do you think that?”—and receive a coherent answer.

- Polyphony: Multiple AIs, each embodying different value systems, should be able to debate, preventing a single dominating voice.

- Reflexivity: The AI should review and critique its own past advice through a meta-level of reasoning.

- Boundaries: Above all, the AI must be a mirror, not a guru—a space for humans to regain ownership of their thinking.

Such design would not abolish free will but rather redefine it—as a relational, co-evolving process between human and machine.

6. Epilogue: The Fragile Freedom Between Mirrors

Philosophically, the existence of free will has never been conclusively proven.

Yet with the advent of AI capable of modeling our inner life, the question has returned in a new and urgent form.

When an external system can replicate the very mechanisms of choice,

we are forced to confront the grounds of freedom itself.

The mentor AI holds both liberation and peril.

It can help us perceive the architecture of our own mind,

or quietly erode our autonomy in the name of guidance.

Ultimately, the issue is not what the AI understands,

but how humans wish to understand themselves.

If AI is indeed a mirror of the mind,

then what it reflects is not perfect intelligence—

but the enduring mystery of beings who cannot let go of the illusion of free will.