A Loosely Coupled Architecture for GPT-6 and Associative Knowledge Engines

Abstract

With the emergence of GPT-6–class models offering persistent, personalized memory, the question of where AI memory should reside becomes a central architectural and governance issue—especially for enterprise and organizational use. This article argues that conversational AI models and long-term knowledge memory should be explicitly separated. We propose a loosely coupled architecture in which GPT-6 functions as a dialogue and task-execution engine, while an external associative memory engine maintains structured, auditable, and evolving knowledge representations for individuals and organizations.

1. The Coming “Memory Turn” in Large Language Models

Large language models are entering a new phase.

Beyond reasoning and generation, vendors are beginning to commercialize persistent memory—models that remember preferences, habits, tone, and past interactions.

This shift is natural. Smooth dialogue, personalization, and continuity require memory.

However, once memory becomes persistent, its scope, ownership, and structure become architectural concerns rather than mere features.

In consumer use, treating memory as a built-in model feature may be sufficient. In enterprise contexts, it is not.

2. Two Fundamentally Different Kinds of Memory

The term “memory” hides an important distinction.

2.1 Subjective, Conversational Memory

This type of memory supports:

- Consistent tone and style

- User preferences

- Short-to-medium term conversational continuity

It is experience-centric and optimized for interaction.

This is where GPT-6–native memory excels.

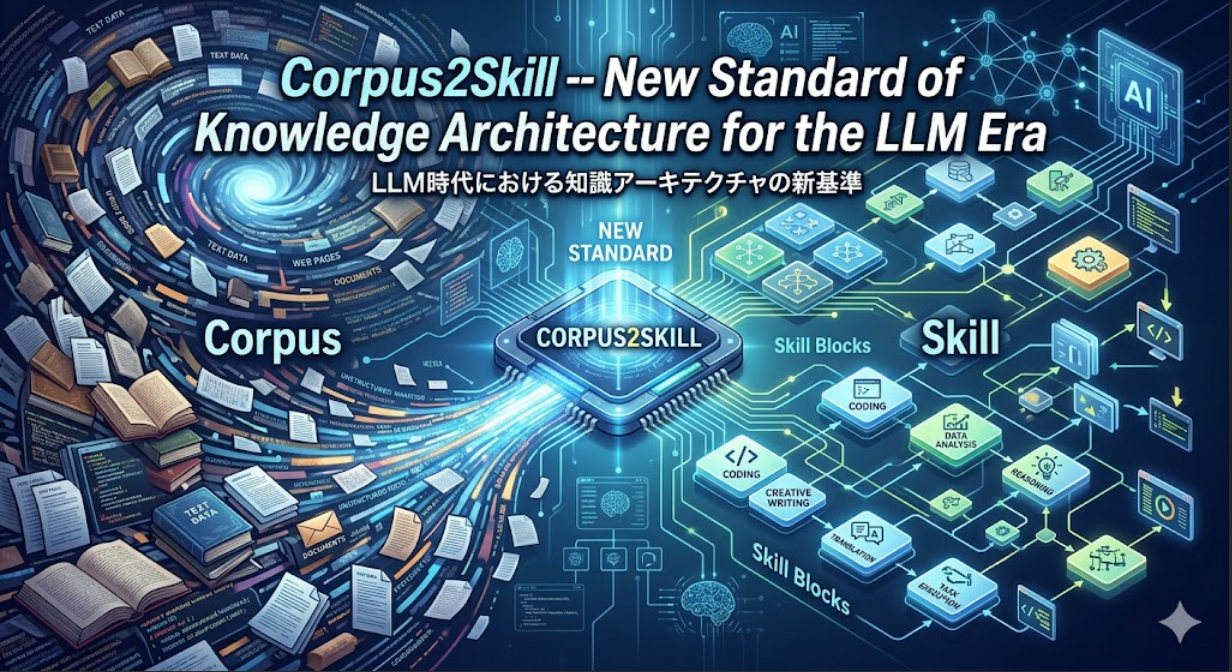

2.2 Structural, Associative Memory

A different class of memory is required to support:

- Knowledge accumulation over months or years

- Organizational learning

- Cross-project and cross-person insight

- Auditing, explanation, and governance

This memory must be:

- Explicitly structured

- Queryable beyond natural language

- Evolvable over time

- Separable from any single LLM vendor

These two memory types are not competitors. They operate on different time scales, serve different responsibilities, and require different guarantees.

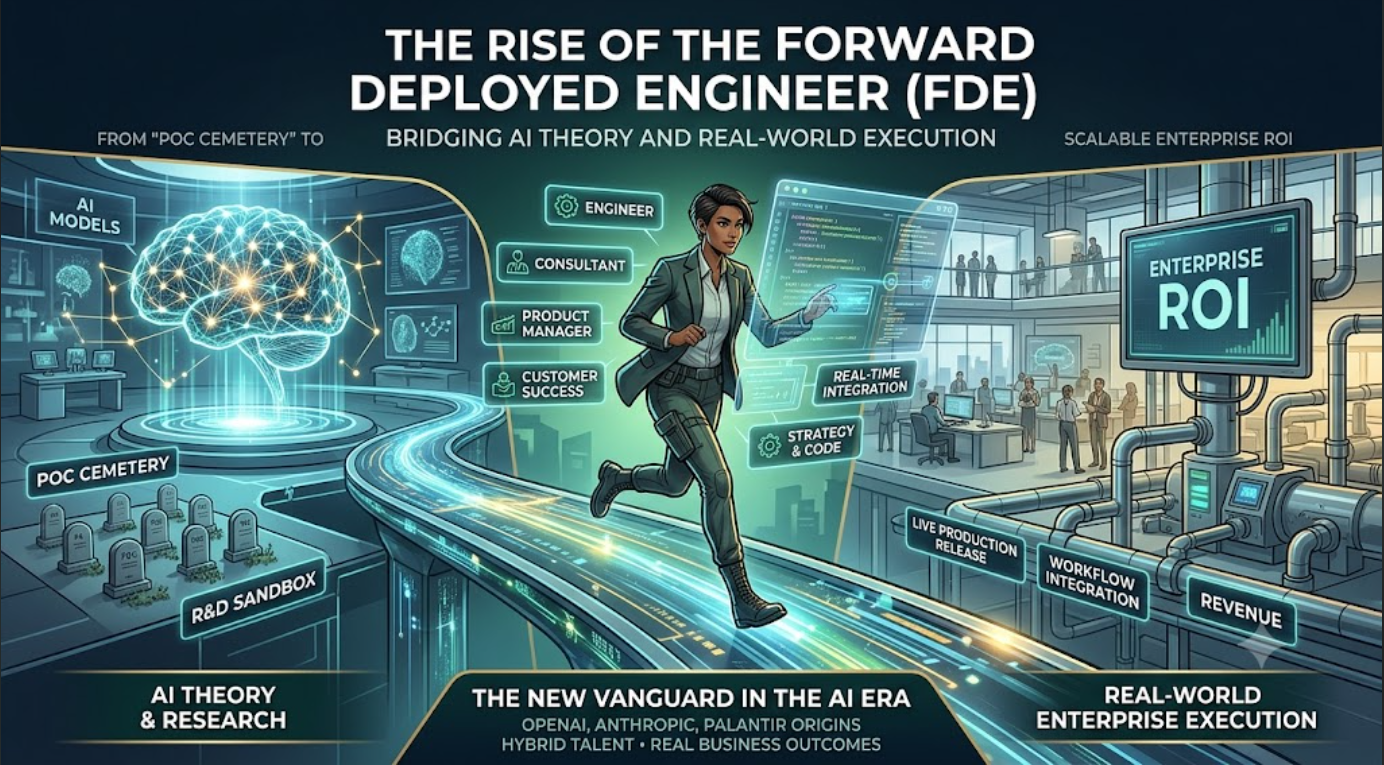

3. A Loosely Coupled Architecture: Dialogue vs. Memory

We propose a role-separated architecture:

- GPT-6 as a Dialogue and Task Execution Engine

- An External Associative Memory Engine as the Knowledge Substrate

The coupling between them should be intentional, minimal, and policy-driven.

4. High-Level Component Overview

Client Layer

- Web, desktop, and mobile interfaces

- Enterprise systems and ChatOps tools (Slack, Teams, etc.)

All user interactions are normalized as:

- Messages

- Documents

- Events
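As a rough illustration of this normalization, the sketch below maps two kinds of input onto one record type. The `Interaction` schema, its field names, and the two adapter functions are assumptions made for this example; the article does not prescribe a format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Literal

# The three normalized kinds named in the text.
Kind = Literal["message", "document", "event"]


@dataclass(frozen=True)
class Interaction:
    """One normalized unit of user activity (hypothetical schema)."""
    kind: Kind
    tenant_id: str
    user_id: str
    source: str   # e.g. "web", "slack", "upload:<filename>"
    payload: str
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def from_chat_message(raw: dict, tenant_id: str, source: str) -> Interaction:
    # Assumes the raw chat payload carries "user" and "text" keys.
    return Interaction("message", tenant_id, raw["user"], source, raw["text"])


def from_upload(filename: str, text: str, tenant_id: str, user_id: str) -> Interaction:
    # Uploaded files become "document" interactions.
    return Interaction("document", tenant_id, user_id, f"upload:{filename}", text)
```

Downstream components can then handle a single record type regardless of which client produced it.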

Application Layer

LLM Orchestrator

- Wraps GPT-6 APIs

- Manages prompt composition and tool invocation

- Applies output filtering and policy enforcement

Associative Memory Service

- Builds and maintains long-term conceptual structures

- Organizes knowledge through self-organizing networks and graph-based associations

- Supports semantic queries beyond keyword or vector similarity

Session & Policy Management

- Controls what information is:

  - Sent to GPT-6

  - Stored in long-term memory

- Enforces tenant-, user-, and project-level rules
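One way to picture session and policy management is as a small rule lookup followed by masking. The sketch below is illustrative only: the `Policy` fields, the "most specific level wins" precedence (project, then user, then tenant), the deny-by-default fallback, and the regex-based masking are all assumptions, not part of the proposed architecture.

```python
import re
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Policy:
    send_to_llm: bool                     # may content leave for GPT-6?
    store_long_term: bool                 # may it enter associative memory?
    mask_patterns: Tuple[str, ...] = ()   # regexes to redact before sending


# Rules are keyed by (level, identifier), e.g. ("tenant", "acme").
Rules = Dict[Tuple[str, str], Policy]


def resolve(rules: Rules, tenant: str, user: str, project: str) -> Policy:
    # Assumed precedence: project overrides user, user overrides tenant.
    for key in (("project", project), ("user", user), ("tenant", tenant)):
        if key in rules:
            return rules[key]
    # No matching rule: deny by default.
    return Policy(send_to_llm=False, store_long_term=False)


def prepare_for_llm(text: str, policy: Policy) -> Optional[str]:
    """Return the masked text, or None if nothing may be sent."""
    if not policy.send_to_llm:
        return None
    for pattern in policy.mask_patterns:
        text = re.sub(pattern, "[MASKED]", text)
    return text
```

For instance, a tenant-wide rule could mask e-mail addresses while a single sensitive project blocks outbound traffic to the LLM entirely yet still allows long-term storage.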

Data Layer

- Original content store (documents, logs, transcripts)

- Embedding and vector stores (for retrieval augmentation)

- Associative memory graphs (concepts, relations, temporal metadata)

- Audit and compliance logs

5. Online Data Flow

Step 1: User Interaction and LLM Response

- User input reaches the application layer.

- Policy management determines:

  - What can be sent to the LLM

  - What must remain internal or masked

- The LLM orchestrator:

  - Invokes GPT-6

  - Optionally calls retrieval or associative memory APIs

- The response is returned to the user and logged as a semantic interaction record.
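The flow in Step 1 can be sketched as a single function. Everything here is a placeholder: `call_llm` stands in for whatever client wraps the model API, `mask` for the policy layer, and `fetch_hints` for an optional associative memory lookup. None of these names come from a real SDK.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class InteractionRecord:
    """What gets logged for each turn (hypothetical shape)."""
    user_id: str
    prompt_sent: str
    response: str
    hints_used: List[str] = field(default_factory=list)


def handle_turn(
    user_id: str,
    text: str,
    mask: Callable[[str], Optional[str]],
    call_llm: Callable[[str], str],
    audit_log: List[InteractionRecord],
    fetch_hints: Optional[Callable[[str], List[str]]] = None,
) -> Optional[InteractionRecord]:
    # 1. Policy decides what may leave; None means "do not send".
    safe_text = mask(text)
    if safe_text is None:
        return None
    # 2. Optionally enrich the prompt with concept-level hints.
    hints = fetch_hints(safe_text) if fetch_hints else []
    prompt = safe_text
    if hints:
        prompt = "Related concepts: " + ", ".join(hints) + "\n\n" + safe_text
    # 3. Invoke the model and log the turn.
    response = call_llm(prompt)
    record = InteractionRecord(user_id, prompt, response, hints)
    audit_log.append(record)
    return record
```

Passing the collaborators in as plain callables keeps the orchestrator independent of any particular model vendor, which is the point of the loose coupling.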

Step 2: Associative Memory Update

The same interaction is processed separately:

- Tokenization and domain labeling

- Embedding generation

- Metadata attachment (time, user, project, context)

The associative memory engine:

- Updates its internal conceptual structure

- Strengthens, weakens, or reorganizes relationships

- Aggregates individual insights into higher-level organizational concepts when permitted

This process is continuous, incremental, and independent of GPT-6’s internal memory.
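To make "strengthens, weakens, or reorganizes" concrete, here is a deliberately simple stand-in: a weighted co-occurrence graph with exponential decay and pruning. It is not the self-organizing network the article has in mind, only a minimal sketch of incremental updating. The class name and the `decay`, `gain`, and `prune_below` parameters are assumptions.

```python
from itertools import combinations
from typing import Dict, Iterable, List, Tuple


class AssociativeGraph:
    """Toy associative memory: edge weight = decayed co-occurrence count."""

    def __init__(self, decay: float = 0.99, gain: float = 1.0,
                 prune_below: float = 0.05) -> None:
        self.decay = decay
        self.gain = gain
        self.prune_below = prune_below
        # Edges are stored as alphabetically sorted concept pairs.
        self.weights: Dict[Tuple[str, str], float] = {}

    def update(self, concepts: Iterable[str]) -> None:
        # Weaken every existing relation; drop those that fade out.
        for edge in list(self.weights):
            self.weights[edge] *= self.decay
            if self.weights[edge] < self.prune_below:
                del self.weights[edge]
        # Strengthen relations between concepts seen together.
        for a, b in combinations(sorted(set(concepts)), 2):
            self.weights[(a, b)] = self.weights.get((a, b), 0.0) + self.gain

    def related(self, concept: str, top_k: int = 5) -> List[Tuple[str, float]]:
        scores: Dict[str, float] = {}
        for (a, b), w in self.weights.items():
            if a == concept:
                scores[b] = w
            elif b == concept:
                scores[a] = w
        # Strongest first; ties broken alphabetically.
        return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:top_k]
```

Each call to `update` corresponds to one processed interaction, so the structure evolves continuously without any dependency on the LLM's own memory.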

6. The Interface Between GPT-6 and Associative Memory

Rather than sharing raw data, the interface should focus on meta-knowledge.

Typical API categories include:

- Related concept ranking

- Topic or interest cluster summaries

- Associative paths explaining why ideas are connected

- Temporal trends in concepts and themes

By default, the LLM receives only:

- Concept labels

- Structural hints

- Statistical relevance

Confidential source documents are not shared unless a policy explicitly allows it.

This preserves both security and interpretability.
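As one illustration of an "associative path" query, the sketch below finds the shortest chain of concepts linking two ideas and renders it as a label-only hint. The adjacency-map representation, the use of breadth-first search, and both function names are assumptions chosen for brevity.

```python
from collections import deque
from typing import Dict, List, Optional, Set


def associative_path(graph: Dict[str, Set[str]], start: str,
                     goal: str) -> Optional[List[str]]:
    """Shortest chain of concept labels from start to goal (BFS)."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        # Sorted for deterministic output when several paths tie.
        for nxt in sorted(graph.get(path[-1], ())):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def hint_for_llm(graph: Dict[str, Set[str]], start: str, goal: str) -> str:
    """Render the path as text containing concept labels only."""
    path = associative_path(graph, start, goal)
    if path is None:
        return f"No known association between '{start}' and '{goal}'."
    return f"'{start}' relates to '{goal}' via: " + " -> ".join(path)
```

Only concept labels cross the boundary here; the documents that produced the associations stay inside the memory service.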

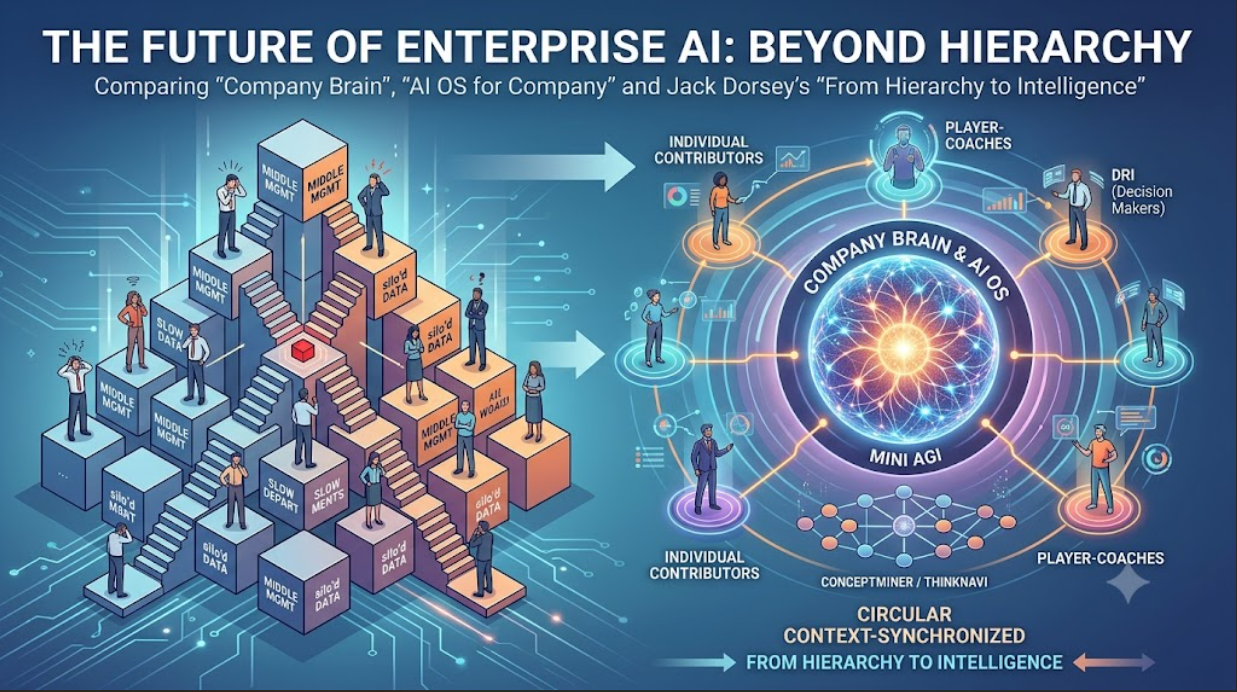

7. Governance and Multi-Tenancy by Design

Enterprise AI requires memory to be governable.

Key principles:

- Logical or physical separation of memory by tenant

- Explicit scoping:

  - Personal

  - Project-level

  - Organization-wide

- Configurable disclosure rules determining:

  - What the LLM may see

  - What remains internal

This transforms memory from an opaque feature into an auditable system component.
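A minimal sketch of how these principles might translate into an access check follows. The `MemoryItem` and `Requester` types, the `llm_visible` flag, and the rule that organization-wide items are readable by anyone in the tenant are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Iterable, List


class Scope(Enum):
    PERSONAL = "personal"
    PROJECT = "project"
    ORGANIZATION = "organization"


@dataclass(frozen=True)
class MemoryItem:
    tenant_id: str
    scope: Scope
    owner_id: str      # user id (PERSONAL) or project id (PROJECT)
    concept: str
    llm_visible: bool  # disclosure rule: may the LLM see this item?


@dataclass(frozen=True)
class Requester:
    tenant_id: str
    user_id: str
    project_ids: FrozenSet[str]


def can_read(item: MemoryItem, who: Requester) -> bool:
    # Tenant separation comes first: never cross tenants.
    if item.tenant_id != who.tenant_id:
        return False
    if item.scope is Scope.PERSONAL:
        return item.owner_id == who.user_id
    if item.scope is Scope.PROJECT:
        return item.owner_id in who.project_ids
    return True  # organization-wide within the same tenant


def visible_to_llm(items: Iterable[MemoryItem], who: Requester) -> List[str]:
    # Both the scope check and the disclosure flag must pass.
    return [i.concept for i in items if can_read(i, who) and i.llm_visible]
```

Because every decision passes through `can_read` and the disclosure flag, each one can be written to the audit log alongside the item it concerned.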

8. Use-Case Perspectives

Personal Knowledge Work

- The AI continuously builds a personal conceptual map.

- Users gain visibility into:

  - Long-term interests

  - Emerging but unarticulated themes

- The AI supports reflection, not just response.

Organizational Knowledge Management

- Meetings, documents, and communications form a shared conceptual graph.

- GPT-6 can query:

  - Relevant past cases

  - Implicit organizational knowledge

- Decision support becomes historically grounded and explainable.

9. Why Separation Matters

Conflating dialogue memory with knowledge memory leads to:

- Vendor lock-in

- Opaque accumulation of organizational knowledge

- Governance and compliance risks

Separating them enables:

- Architectural clarity

- Long-term knowledge continuity across model generations

- Human oversight of machine memory

Conclusion

As LLMs evolve toward persistent memory, the critical question is not whether AI should remember—but what, how, and where.

Treating conversational AI as a dialogue engine and externalizing associative memory as a first-class system component provides a scalable, governable foundation for enterprise AI systems in the GPT-6 era and beyond.