Executive Summary

- Definition and Purpose: AI governance refers to the frameworks of rules, processes, and oversight that ensure AI systems are developed and used in a safe, ethical, and transparent manner. It aims to manage risks (e.g. bias, privacy breaches, misuse) and uphold principles like fairness, accountability, and human rights [ibm.com, iapp.org]. By implementing AI governance, organizations can build trust and align AI outcomes with societal values while mitigating potential harms [ibm.com, iapp.org].

- Global and Japan Trends: Around the world, governments are advancing AI governance through both regulations and soft-law guidelines. Japan has taken a multi-tiered approach: adopting “Social Principles of Human-centric AI” (2019) as ethical baselines (e.g. human-centricity, fairness, transparency) [meti.go.jp], and recently compiling AI Guidelines for Business (v1.0, 2024) to integrate earlier AI R&D and utilization guidelines into a unified risk-based framework [meti.go.jp, grjapan.com]. Japan’s approach emphasizes voluntary compliance and alignment with global norms (OECD, G7 Hiroshima AI Process), while a new AI Promotion Act (2025) provides a strategic, non-binding blueprint to promote AI use responsibly (viewing AI as a strategic asset, encouraging transparency and risk mitigation through multi-stakeholder roles) [ibanet.org]. Globally, the EU AI Act (enacted 2024) introduces a comprehensive risk-tiered regulation – banning certain unacceptable-risk practices and imposing strict requirements on “high-risk” AI systems (including mandatory risk management, high-quality data governance, transparency, human oversight, and robustness tests) [bakerdonelson.com]. This EU framework, which carries hefty fines of up to 7% of global turnover, applies in stages, with most obligations taking effect in 2026, and is pushing companies worldwide to institute AI risk controls [bakerdonelson.com]. In the United States, while no single law governs AI yet, the NIST AI Risk Management Framework (2023) has emerged as a voluntary standard to guide organizations in integrating trustworthiness and risk mitigation across the AI lifecycle [nist.gov]. The U.S. also signaled future regulation via an October 2023 Executive Order requiring developers of the most advanced AI models to share safety test results with the government [transcend.io].
In other parts of Asia, countries are setting their own strategies: Singapore released a Model AI Governance Framework (2019, updated 2020) emphasizing explainability and human-centric design, and launched “AI Verify” in 2022 – a first-of-its-kind toolkit for companies to test AI systems against 11 ethical principles (e.g. transparency, fairness, accountability) using technical audits [pdpc.gov.sg]. China, meanwhile, has woven AI governance into its national agenda (e.g. the New Generation AI Development Plan), instituting regulations on algorithms and generative AI that enforce content controls and security reviews – reflecting an approach that prioritizes state oversight and national competitiveness alongside ethical norms [transcend.io].

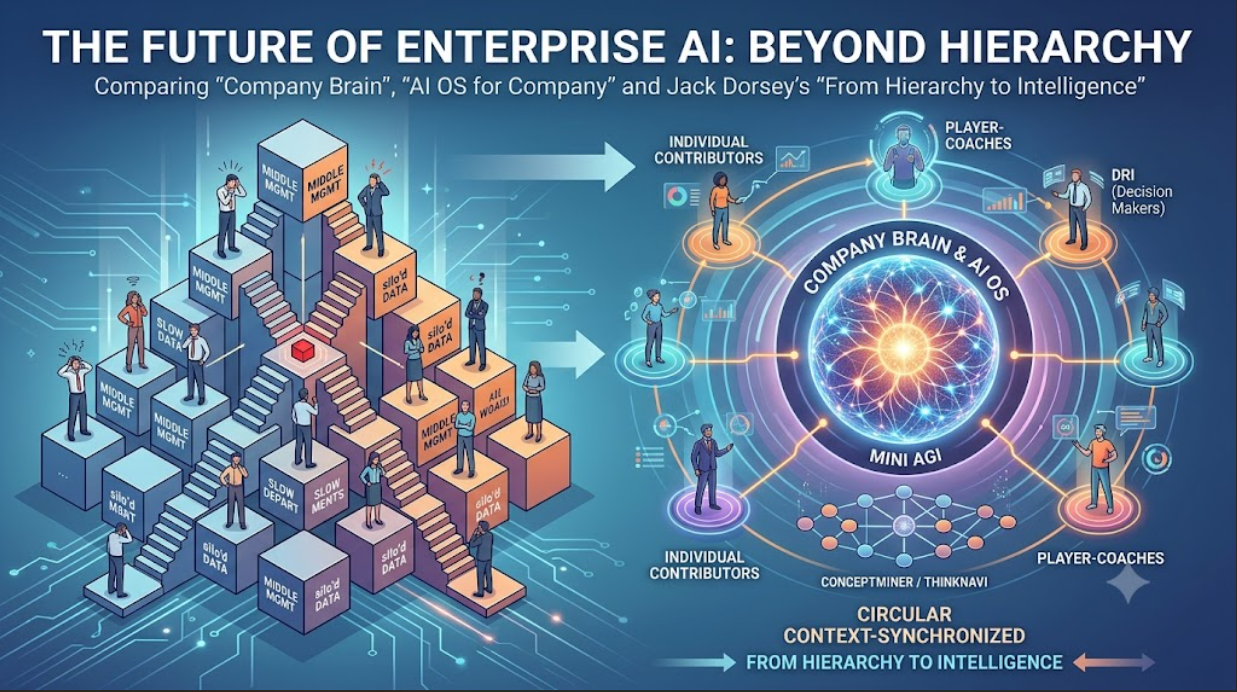

- Corporate Case Studies: Leading corporations have proactively built internal AI governance structures to operationalize responsible AI. Toyota (Toyota Motor North America) created a Responsible AI Organization – a cross-disciplinary AI governance board uniting experts from AI engineering, data, compliance, privacy, legal, and cybersecurity – to review and guide all AI initiatives [cdomagazine.tech]. This board’s priorities include company-wide education on AI (e.g. how generative AI works), ensuring every AI deployment decision “stays above board” ethically, and rigorously vetting projects for customer benefit, safety and regulatory compliance [cdomagazine.tech]. Sony instituted a multi-layered AI governance system: it published Sony Group AI Ethics Guidelines in 2018 and then established an AI Ethics Committee in 2019 to oversee AI R&D and product use across its business units [sony.com]. Sony’s internal AI Ethics Office (now the AI Governance Office) drives implementation of these principles, requiring that products with AI are evaluated for fairness and transparency early in development [sony.com]. In 2023-2024, Sony issued internal guidelines on generative AI use, followed by a Global AI Governance Policy (2025) to ensure compliance with laws and consistent practices group-wide [sony.com]. Hitachi likewise adopted its own “Principles for the Ethical Use of AI” (February 2021) to reduce AI’s risks and embed ethics in its Social Innovation Business [hitachihyoron.com, hitachi.com]. Hitachi set up governance mechanisms to enforce these principles as part of risk management, and continually updates its policies – for example, introducing internal generative AI usage guidelines in 2023 and extending them to external AI services by 2024 [hitachi.com]. In the global tech sector, companies like Google and Microsoft have each formulated corporate AI principles and oversight processes.
Google’s well-publicized AI Principles (2018) commit to socially beneficial AI applications and prohibit certain uses (e.g. weapons), stressing fairness, safety, privacy, and accountability [transcend.io]. Google integrates these principles via internal review checkpoints for new AI research and products, and has teams dedicated to responsible AI (e.g. model audit and fairness teams). Microsoft, similarly, has championed “Responsible AI” by establishing guiding principles of fairness, transparency, privacy, security, inclusiveness, and accountability [microsoft.com]. It stood up an internal AI ethics committee (Aether) and an Office of Responsible AI to enforce its Responsible AI Standard (a detailed internal governance standard released publicly in 2022). This framework requires steps like impact assessments for AI systems, bias testing, documentation of model limitations, and human oversight for high-impact uses. Microsoft also developed toolkits (e.g. bias detection and interpretability tools) to help its engineers and customers build AI in line with these governance standards [transcend.io]. IBM provides another best-practice example: it formed an AI Ethics Board in 2019 that reviews new AI products and research for alignment with IBM’s AI ethics principles [ibm.com]. IBM’s board – a cross-functional body including executives and experts – ensures accountability at the highest levels for issues like bias, explainability, and privacy in IBM’s AI offerings [ibm.com]. These case studies show that leading firms tend to create dedicated governance bodies (ethics committees or boards), promulgate clear AI ethical guidelines, establish internal review processes for AI projects, and invest in tools or techniques (such as bias audits, explainability methods, and model documentation protocols) to enforce responsible AI practice.

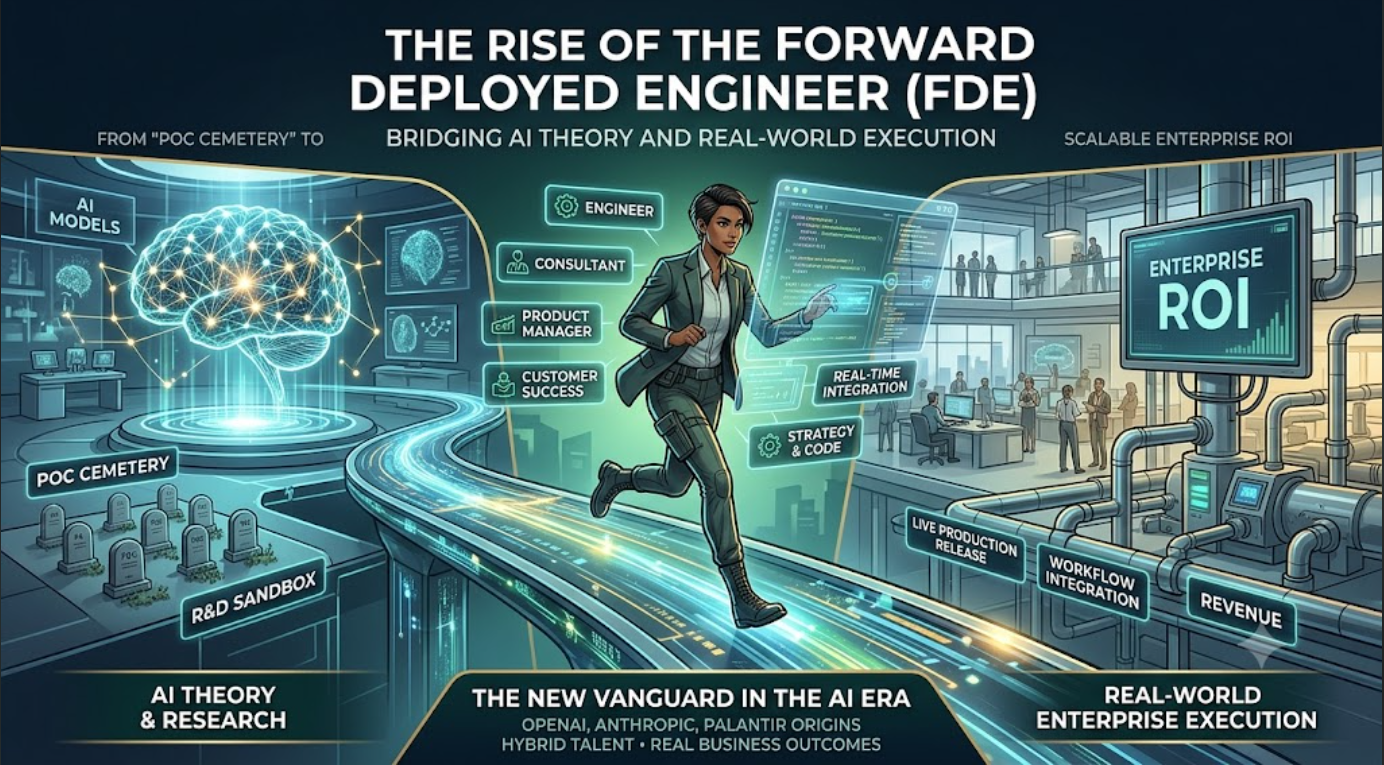

- Building an AI Governance Framework – Methodologies and Steps: Implementing AI governance in an organization involves a combination of structural measures, policies, and ongoing processes. Governance Structure: A crucial first step is to designate clear oversight for AI. Companies often form a cross-functional AI governance committee or board that includes stakeholders from IT/data science, legal, compliance, risk management, HR, and business leadership [fisherphillips.com]. This committee defines the governance strategy, reviews major AI projects, and ensures accountability for AI outcomes is assigned to appropriate executives or teams (e.g. naming “AI product owners,” data stewards responsible for data quality, and algorithm auditors who check for performance and ethical issues) [fisherphillips.com]. Even smaller firms can assign an existing risk or ethics officer to oversee AI use. Policies and Principles: Organizations should craft a comprehensive internal AI policy or set of guidelines that align with broader ethical principles and legal requirements. This policy should state the company’s AI principles (e.g. fairness, transparency, privacy, safety) and establish rules and procedures for AI development and deployment [fisherphillips.com, sony.com]. For example, it may mandate that all AI models undergo bias testing, that high-risk AI applications get management sign-off, or that users are informed when AI is making decisions. A strong data governance program underpins AI governance – ensuring data quality, fairness, and privacy compliance – since “the more organized and clean the dataflows are, the better” for trustworthy AI [iapp.org]. Risk Assessment and Use-Case Inventory: Before scaling AI, companies should identify and categorize all AI use cases in the organization [iapp.org]. For each application, assess potential risks (ethical, legal, or operational). Frameworks like NIST’s encourage mapping AI risks and impacts early in the project lifecycle.
Many organizations perform AI impact assessments or similar checklists during development to evaluate an AI system’s intended purpose, data usage (and safeguards for that data), potential biases, and any regulatory or ethical considerations [fisherphillips.com]. Prioritizing high-impact or “high-risk” AI applications ensures governance efforts focus where stakes are highest (akin to the risk-based approaches in EU/Japan guidelines). Processes and Tools: To operationalize governance, specific processes and technical tools should be put in place. Common best practices include: Bias and Fairness Audits – Regularly test AI models for unfair biases or disparate impacts, using metrics and bias-detection tools [fisherphillips.com]. This may involve demanding that vendors provide evidence of diverse training data and requiring periodic re-testing of model outputs for fairness. Explainability & Transparency – Implement methods to make AI decisions explainable to stakeholders. For critical decisions (e.g. lending, hiring), define protocols to provide explanations or allow human review, ensuring AI decisions are “explainable and understandable” to affected users and auditors [fisherphillips.com]. Human Oversight – Determine the appropriate level of human-in-the-loop for each AI system [pdpc.gov.sg]. High-risk AI should have human review or the ability to intervene if the AI output seems incorrect or harmful. Documentation – Maintain thorough documentation for AI systems: data sources, model versions, validation results, and decision rationales [fisherphillips.com]. As one guideline puts it: “If it’s not in writing, it didn’t happen” [fisherphillips.com], so recording the development process and key decisions is essential for accountability. Incident Response – Plan for AI failures or incidents.
For instance, establish procedures to quickly address AI errors (such as a model causing customer harm or a major bias incident), including pulling the system from production if needed and informing stakeholders. Training and Awareness – Train employees and end-users on AI governance policies and AI basics. Regular (at least annual) training helps ensure that staff understand the company’s AI ethics standards and know how to escalate concerns [fisherphillips.com]. This is especially important for those developing or implementing AI, but general AI literacy across the workforce supports a culture of responsible AI use. Continuous Monitoring and Improvement: AI governance is not a one-time setup but an ongoing practice. Organizations should schedule periodic audits of AI systems and governance processes themselves [fisherphillips.com]. This includes monitoring models in production for drift or emerging biases, reviewing whether governance controls (like approvals and checklists) are being followed, and keeping up to date with evolving best practices and regulations [fisherphillips.com]. Many companies now integrate AI governance into their broader risk management or internal audit programs to ensure longevity. Notably, small and mid-sized enterprises (SMEs) can adopt these measures in a “right-sized” way. Experts note that AI governance need not mean creating big new departments or bureaucracy for SMEs [iapp.org]. Instead, the focus should be on embedding basic responsible AI practices into existing structures – for example, leveraging existing IT governance or compliance personnel to also cover AI, establishing “guardrails” and asking the right questions about AI use rather than developing exhaustive frameworks from scratch [iapp.org]. Scalable tools (like simpler checklists, or external frameworks such as Singapore’s AI Verify toolkit for testing models) can help resource-constrained firms implement governance.
In summary, a practical step-by-step approach to building AI governance is: (1) Identify current and planned AI uses and their risks; (2) Define clear internal principles and policies for AI aligned with laws and ethics; (3) Set up a governance team or assign oversight roles; (4) Implement procedures for risk assessment, bias mitigation, documentation, and human oversight in the AI development lifecycle; (5) Educate and train your organization on these practices; and (6) Continuously monitor compliance and model performance, updating governance measures as needed [iapp.org]. Following these steps helps ensure even smaller organizations “manage AI responsibly” within their means while scaling up as their AI utilization grows [iapp.org].
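
As a rough illustration of the bias and fairness audits mentioned above, the sketch below computes per-group selection rates and a disparate impact ratio for a batch of model decisions. It is only one possible metric, not a method prescribed by any of the cited frameworks. The function names, the sample data, and the 0.8 review threshold (a common rule of thumb) are all assumptions made for this example.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of favorable outcomes per group.

    `decisions` is an iterable of (group, favorable) pairs, where
    `favorable` is True when the model's decision benefits the person.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, is_favorable in decisions:
        totals[group] += 1
        if is_favorable:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so no disparity
    return min(rates.values()) / highest


# Hypothetical audit sample: (group label, favorable decision?)
sample = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 45 + [("B", False)] * 55
)

REVIEW_THRESHOLD = 0.8  # assumed rule-of-thumb cutoff; set per company policy

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_review)
```

In practice a result below the chosen threshold would not prove unlawful bias; it would simply route the model to the human review and documentation steps described above.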

- Future Outlook and Challenges: As AI technologies evolve rapidly, AI governance frameworks must continuously adapt. One key challenge is the pace of innovation outstripping regulations – the current landscape has been likened to a “Wild West” where rules are still catching up [transcend.io]. This regulatory gap means many companies have relied on self-regulation and voluntary principles, which can lead to inconsistencies. However, more stringent laws are on the horizon (e.g. the EU AI Act’s upcoming requirements, and early moves in the US and Japan), so organizations will need to stay agile and update their governance programs to remain compliant across jurisdictions. Global regulatory fragmentation is another concern: differing definitions of “high-risk” AI or divergent standards (EU vs. US vs. Asia) could complicate compliance for multinational businesses. Efforts like the G7’s Hiroshima AI Process (with its 2023 code of conduct focusing on common principles like transparency, safety, and prevention of AI misuse) aim to harmonize governance approaches internationally [grjapan.com] – if such global guidelines gain traction, they may ease the burden on companies by providing a more unified direction. Advances in AI capabilities – especially in generative AI and autonomous systems – present new ethical and risk management questions. For example, generative AI can produce misinformation or biased content at scale, raising concerns about accountability for AI-generated outputs and intellectual property ownership. A recent study found “80% of business leaders see AI explainability, ethics, bias or trust as major roadblocks to generative AI adoption”, underscoring that without robust governance, organizations may hesitate to fully deploy these new AI tools [iapp.org]. Thus, future governance frameworks will likely put greater emphasis on “ethics by design” – embedding ethical considerations and safety checks right from the model design and data selection phase [iapp.org].
We can also expect the emergence of new roles and practices: independent AI auditors or certification processes may become common to validate an AI system’s adherence to standards, much as financial audits are routine. Another challenge is ensuring transparency and auditability of complex AI (like deep learning neural networks). There is ongoing R&D into techniques for explainability and continuous monitoring (e.g. algorithmic “black box” audits) which will be crucial for governance as AI gets more advanced. Social and reputational considerations will continue to drive corporate AI governance as well – public scrutiny is high, and a major AI failure can cause significant brand damage. Companies will need to maintain public accountability (e.g. publishing responsible AI reports or model cards) to build stakeholder trust. Finally, achieving the right balance between innovation and control remains a core tension. Overly rigid governance could stifle beneficial AI innovation, while overly lenient approaches invite risks. The future likely lies in adaptive, risk-proportionate governance: frameworks that are strict for high-stakes AI (healthcare, finance, public safety) but more permissive for low-risk applications, combined with an organizational culture that encourages ethical reflection at every stage. In conclusion, AI governance is becoming an indispensable component of corporate strategy. Forward-looking organizations and governments are converging on best practices that blend ethical principles, risk management, and compliance. By learning from global trends and industry leaders – and by instituting clear structures, policies, and continuous oversight – companies can harness AI’s opportunities while upholding accountability and trust. The ongoing challenge will be to evolve these governance frameworks in tandem with AI’s rapid development, ensuring they remain effective and relevant in addressing tomorrow’s AI risks and innovations [transcend.io, grjapan.com].

1. Definition and Purpose of AI Governance

In a corporate context, AI governance refers to the established set of processes, policies, and organizational structures that guide how AI systems are developed and used, to ensure they align with ethical standards and regulatory requirements. It provides the “guardrails” that keep AI deployments safe, lawful, and beneficial. According to IBM, AI governance encompasses the “processes, standards and guardrails” that make sure AI tools are safe and ethical, directing AI development in ways that promote safety, fairness, and respect for human rights [ibm.com]. In practical terms, this means having oversight mechanisms that address the unique risks posed by AI – such as biased decision-making, lack of transparency, security vulnerabilities, or potential infringements on privacy – while still enabling innovation [ibm.com, iapp.org].

The purpose of AI governance is multifold. First, it is about risk management: identifying and mitigating risks associated with AI throughout its lifecycle, from data collection and model training to deployment and monitoring. For example, governance measures help catch and correct biased outcomes or unsafe recommendations before they can cause harm. Second, it ensures ethical compliance and accountability: AI systems should uphold the organization’s values and broader societal values. Governance frameworks thus promote principles like fairness (avoiding unjust discrimination by AI), accountability (assigning human responsibility for AI decisions), transparency (making AI decision processes explainable), and privacy protection [fisherphillips.com]. These principles often overlap with legal obligations, so AI governance also supports regulatory compliance (e.g. with data protection laws or sector-specific AI rules).

Another key purpose is to build trust – both internally (so that management and employees trust the AI outputs used in decision-making) and externally (so that customers, regulators, and the public trust the company’s AI products and services). Effective AI governance fosters trust by demonstrating that the company is proactively controlling AI’s impacts and ensuring AI is used in a socially responsible way [ibm.com, iapp.org]. This in turn protects the company’s reputation and reduces the likelihood of scandals or liabilities stemming from AI misuse. As IBM’s perspective highlights, without proper governance, AI’s human-created flaws (biases, errors) can lead to discrimination or other harm, eroding public confidence [ibm.com]. Thus, governance provides a structured approach to “mitigate these potential risks” and align AI with ethical standards and societal expectations [ibm.com].

In summary, AI governance in the corporate setting is about ensuring that AI initiatives are not just technically robust, but also ethically sound and legally compliant. It establishes accountability – for instance, through oversight boards or designated executives – and creates a culture where AI is deployed with caution and oversight, rather than recklessly. By balancing innovation with safeguards, AI governance aims to “help ensure AI systems do not violate human dignity or rights,” while enabling organizations to reap AI’s benefits responsibly [ibm.com]. This is increasingly seen as essential: as AI becomes integral to operations and strategy, companies recognize that unmanaged AI can pose major financial, legal, and reputational risks [ibm.com]. Therefore, defining clear AI governance frameworks serves the purpose of guiding corporate AI use towards positive outcomes (efficiency, insight, customer value) and away from adverse outcomes (bias, accidents, compliance breaches).

2. International and Domestic Trends in AI Governance

2.1 Japan’s AI Governance Frameworks and Policies

Japan’s approach to AI governance has evolved through a series of national principles, guidelines, and recently legislation – emphasizing “soft law” guidance and industry self-regulation, coordinated with global norms. In March 2019, the Japanese government (Integrated Innovation Strategy Promotion Council) released the Social Principles of Human-Centric AI, a high-level ethical charter that set out core values for AI in society [meti.go.jp]. These seven principles (human-centricity; education and literacy; privacy protection; security; fair competition; fairness, accountability and transparency; and innovation) articulate Japan’s vision of trustworthy AI [meti.go.jp]. They notably align with the OECD AI Principles and were meant to guide both public and private sectors in Japan [meti.go.jp]. Companies are expected to derive their own AI codes of conduct from these social principles, tailoring them to their business context [meti.go.jp].

To operationalize those high-level ideals, Japan’s Ministry of Internal Affairs and Communications (MIC) and Ministry of Economy, Trade and Industry (METI) have issued non-binding yet influential guidelines for AI developers and users. METI in particular led the development of the AI Governance Guidelines for Implementation of AI Principles, first published as an interim version in 2019 and updated through version 1.1 by 2022 [meti.go.jp]. These guidelines serve as a practical “guideline of guidelines,” consolidating typical AI governance practices and examples to help companies implement the abstract principles in day-to-day AI projects [meti.go.jp]. They recommend steps like conducting risk and impact assessments for AI, setting governance goals, establishing internal AI control systems, and ensuring accountability and transparency in AI operations. Though voluntary, they became widely referenced in Japan as a baseline for corporate AI governance, encouraging “multi-stakeholder” participation (developers, users, management) in keeping AI use ethical [meti.go.jp].

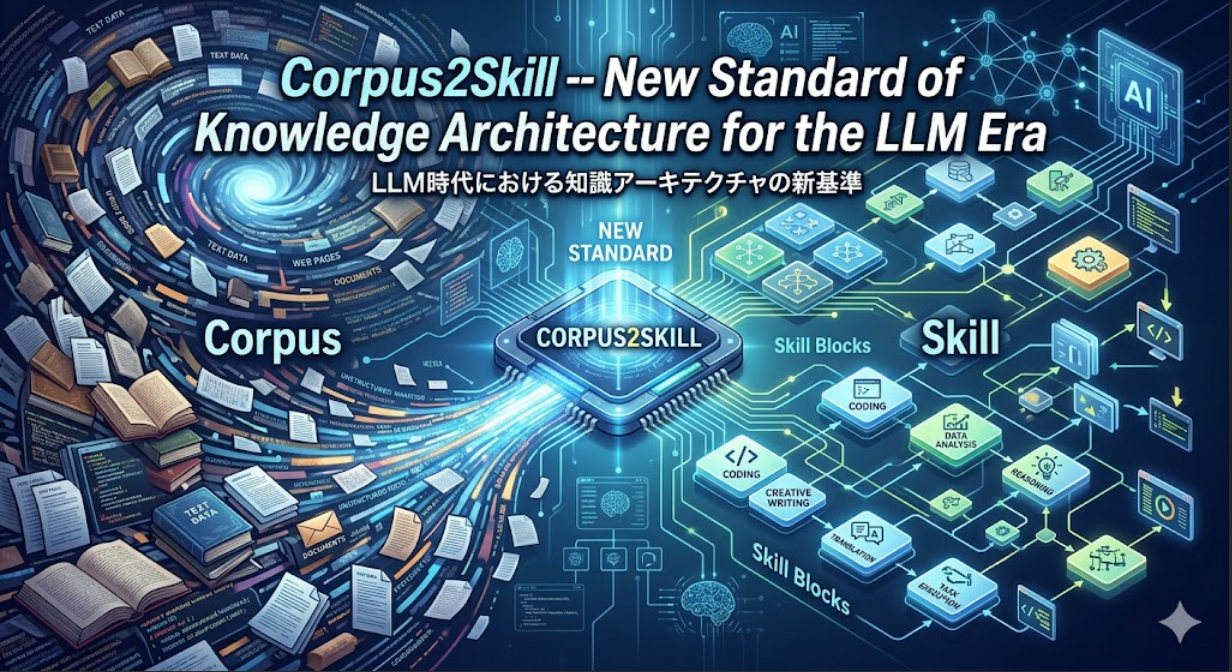

In response to the rapid rise of new AI technologies (especially generative AI in 2022–2023), the Japanese government decided to integrate and update its various AI guidance documents. In April 2024, METI and MIC jointly issued the AI Guidelines for Business Version 1.0, a comprehensive guideline targeting all AI business operators [meti.go.jp]. This new guideline unified three prior documents – the AI R&D Guidelines (2017), AI Utilization Guidelines (2019), and METI’s AI Governance Guidelines (2022) – into a single resource [meti.go.jp]. The AI Guidelines for Business adopt a risk-based approach, meaning they urge companies to assess the potential impact of an AI system and apply stricter oversight to higher-risk AI applications [grjapan.com]. For instance, if an AI could significantly affect human lives or rights, businesses should implement more rigorous risk mitigation (e.g. thorough testing, human oversight) than for a low-risk use case [grjapan.com]. The 2024 Guidelines also explicitly address generative AI, given its rapid dissemination, and include checklist tools and case studies to be “user-friendly” for a broad range of companies [meti.go.jp]. Importantly, they emphasize alignment with international frameworks – noting consistency with the G7’s Hiroshima AI Process and OECD AI Principles – to ensure Japanese companies remain globally interoperable in their AI governance practices [grjapan.com]. The government has signaled these guidelines will be a living document, to be updated frequently as AI technology and societal expectations evolve [meti.go.jp].

On the legal front, Japan historically favored “governance by guidelines” over hard regulation for AI, to avoid stifling innovation. However, it recently enacted its first AI-specific law, the AI Promotion Act, in May 2025 [ibanet.org]. Rather than imposing direct rules on AI use, this Act lays out a national strategy for AI development and trust. It defines AI broadly and enshrines four guiding principles: positioning AI as a strategic asset for societal and economic benefit, promoting AI use across industries, mitigating AI’s risks through transparency and accountability measures, and actively contributing to international AI governance norms [ibanet.org]. The Act adopts a multi-stakeholder model – assigning responsibilities to government agencies, businesses, universities, and citizens to collaborate on AI promotion and risk management [ibanet.org]. Oversight is coordinated by a new AI Strategy Headquarters under the Prime Minister [ibanet.org]. Notably, the AI Promotion Act is mostly declarative and non-binding (a kind of “soft law”): it does not impose enforceable regulations on companies, but instead encourages voluntary compliance and signals future policy directions [ibanet.org]. This reflects Japan’s traditional approach of using “regulation by guidance” – relying on industry to self-regulate in line with government-issued principles – rather than immediate punitive laws [ibanet.org]. That said, the Act lays groundwork for potential stricter measures: it highlights transparency, safety, and alignment with international standards as key issues, hinting that ministries might later issue binding rules for high-risk AI sectors (like healthcare or critical infrastructure) under existing laws [ibanet.org].

In addition to these, Japan’s sectoral regulators are adapting existing laws to AI. For example, the privacy regulator (PPC) warned that inputting personal data into generative AI could violate data protection law (APPI) if done without consent, and even issued a formal warning to OpenAI in 2023 to improve transparency and safeguards [ibanet.org]. Copyright laws were clarified to address AI training data usage, with a “General Understanding” issued in 2024 on how Japan’s Copyright Act exemptions apply to AI model training [ibanet.org]. These interpretations make it easier for AI developers to train models (non-expressive data use is allowed) but set boundaries for fine-tuning on copyrighted styles [ibanet.org].

Overall, Japan’s domestic trend is characterized by an agile governance philosophy: use guiding principles and updated guidelines to steer ethical AI, encourage companies to proactively self-govern, and only gradually move toward hard regulation in concert with global efforts. The government explicitly aims for international harmonization – for instance, Japan championed the G7 Hiroshima AI Process in 2023, which led to a joint G7 “Comprehensive AI Policy Framework” in December 2023 outlining shared principles and a code of conduct for AI developers among advanced economies [grjapan.com]. By aligning national guidelines with such international frameworks, Japan hopes to shape a “human-centric” AI governance model that can be accepted globally [grjapan.com]. For Japanese corporations, this means their AI governance is largely guided by non-binding yet authoritative documents (AI Guidelines for Business, etc.) and general laws (privacy, consumer protection), with an understanding that stricter oversight (especially for generative and other high-impact AI) is likely coming through refined guidelines or future regulations.

2.2 Global Developments: EU, US, and Other Regions

Globally, AI governance is a rapidly advancing field, with different jurisdictions taking distinctive approaches that reflect their legal systems and policy priorities. Notably, Europe has emerged as a front-runner in binding AI regulation with the EU Artificial Intelligence Act (AI Act). The AI Act (formally adopted in 2024 and in force since 1 August 2024) is a landmark legislation that introduces a risk-based regulatory scheme for AI across all EU member states [transcend.io]. It categorizes AI applications into tiers of risk: “unacceptable risk” AI (such as social scoring systems or real-time biometric surveillance in public) is prohibited outright; “high-risk” AI (e.g. AI in medical devices, recruitment, loan approvals, driving autonomous vehicles) is allowed but heavily regulated; and lower-risk AI faces lighter transparency or disclosure requirements. For high-risk AI systems, the Act sets extensive compliance obligations on providers and users of such systems [transcend.io, bakerdonelson.com]. These include establishing a thorough risk management system throughout the AI’s lifecycle, ensuring high-quality training data and sound data governance practices, keeping detailed technical documentation and logs for traceability, building in appropriate transparency and user instructions (so users know they are interacting with AI and understand its limitations), providing for adequate human oversight, and ensuring the AI’s accuracy, robustness and cybersecurity [bakerdonelson.com]. In essence, the EU is mandating what amounts to strict AI governance processes by law for high-risk AI – such as conducting bias testing, implementing controls to prevent harm, and undergoing a conformity assessment (a certification process) before an AI system can be marketed [bakerdonelson.com].
The Act also introduces accountability through penalties: companies can face fines of up to 7% of global annual turnover (or EUR 35 million, whichever is higher) for the most serious violations, exceeding even GDPR fines [bakerdonelson.com]. Although most of the AI Act’s obligations only start to apply two years after entry into force (in 2026) [bakerdonelson.com], it is already influencing corporate behavior worldwide – especially for any company that operates or sells products in Europe. Businesses are now preparing inventories of their AI systems and assessing which might be deemed “high-risk” under the EU definitions [bakerdonelson.com], since such systems will require compliance steps like external audits or adjustments to meet the EU’s criteria. The EU AI Act is widely seen as a “trend-setter” that could become a global benchmark (similar to how GDPR influenced global privacy practices) [bakerdonelson.com]. It has also spurred discussions on international coordination: for instance, the Act contemplates codes of conduct for AI not covered by the high-risk rules, and the EU is engaging with partners on aligning AI standards.
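
The tiered structure described above can be pictured as a lookup from risk tier to obligations, which is roughly what an internal AI inventory needs to record. The sketch below is a simplification for internal triage, not a legal classification. The `RiskTier`, `OBLIGATIONS`, `USE_CASE_TIERS`, and `obligations_for` names are hypothetical, the obligations are paraphrased from the text, and the "customer service chatbot" entry is an assumed example of a lower-risk use.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LOWER = "lower"


# Obligations paraphrased from the text above; not an exhaustive legal list.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited: do not deploy"],
    RiskTier.HIGH: [
        "risk management system across the lifecycle",
        "high-quality training data and data governance",
        "technical documentation and logging",
        "transparency and user instructions",
        "human oversight",
        "accuracy, robustness and cybersecurity",
        "conformity assessment before marketing",
    ],
    RiskTier.LOWER: ["transparency or disclosure where applicable"],
}

# Hypothetical internal inventory; the chatbot entry is an assumed example.
USE_CASE_TIERS = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "recruitment screening": RiskTier.HIGH,
    "loan approval": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LOWER,
}


def obligations_for(use_case):
    """Return (tier, obligations) for an inventoried use case.

    Unknown use cases raise KeyError so they get classified by a person
    instead of silently defaulting to a low tier.
    """
    tier = USE_CASE_TIERS.get(use_case)
    if tier is None:
        raise KeyError(f"{use_case!r} is not in the inventory; classify it first")
    return tier, OBLIGATIONS[tier]


tier, duties = obligations_for("loan approval")
print(tier.value, len(duties))
```

Failing loudly on an unclassified use case is a deliberate choice here: it keeps the inventory step ahead of deployment, in line with the practice of cataloguing AI systems before assessing which are high-risk.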

In the United States, there is no overarching federal AI law equivalent to the EU’s approach yet. The U.S. has so far favored a combination of guidance, standards, and sector-specific or state-level rules. A central development has been the NIST AI Risk Management Framework (AI RMF), which was released as version 1.0 in January 2023 after extensive consultation [nist.gov]. The NIST AI RMF is a voluntary framework intended to help organizations in any industry “incorporate trustworthiness considerations into the design, development, use, and evaluation” of AI systems [nist.gov]. It defines four core functions – Govern, Map, Measure, Manage – that organizations should continually perform. In summary: Govern refers to establishing organizational governance processes around AI (culture, policies, roles, and oversight to manage AI risk) [hyperproof.io]; Map means understanding and contextualizing the AI system and its potential risks; Measure involves analyzing and assessing AI risks (e.g. testing for bias, security vulnerabilities, etc.); and Manage means taking actions to mitigate risks and regularly monitoring AI performance. The AI RMF has quickly gained traction as a baseline in the U.S., with many companies using it as a blueprint for their internal AI governance, and it aligns with principles from OECD and others. Notably, the White House has also issued policy guidance like the “Blueprint for an AI Bill of Rights” (Oct 2022), which outlines aspirational rights such as protection from unsafe or biased AI systems and notice when AI is in use. Although this is not law, it signals expectations to industry. In October 2023, U.S. President Biden took a more concrete step by signing a sweeping Executive Order on Safe, Secure, and Trustworthy AI.
This EO directs various federal agencies to set standards for AI safety and security and, importantly for corporations, requires developers of the most advanced foundation models (above a certain capability threshold) to notify the government and share the results of safety tests (red-teaming, etc.) before deploymenttranscend.io. While enforceability is via existing defense or security laws, this marks the first U.S. federal action mandating some private AI risk disclosures. Additionally, some U.S. states have begun to pass their own AI laws (for example, laws in Colorado and Illinois on AI hiring tools, or a new California law creating an Office of AI). The trend in the U.S. is a patchwork of guidelines and emerging regulations, often focused on specific concerns like bias in employment or transparency in using AI for consumers. We also see significant self-regulation by companies (which will be detailed in section 3) – many big tech firms (Microsoft, Google, IBM, Meta, etc.) are creating internal standards that sometimes go beyond legal requirements, in an effort to preempt stricter laws and address stakeholder concernstranscend.iotranscend.io.

Other regions are also shaping AI governance in notable ways. In Europe beyond the EU, the UK is pursuing a sector-based, principles-driven approach (eschewing one omnibus AI law for now), and has issued guidance via its AI Regulation White Paper (March 2023) emphasizing innovation-friendly, flexible regulation through existing regulators. The UK also hosted an AI Safety Summit in November 2023 to coordinate international efforts especially on frontier AI risks. Canada has a proposed Artificial Intelligence and Data Act (AIDA) that would introduce some algorithm transparency and risk management requirements, currently under legislative debate. In Asia-Pacific, aside from Japan (covered above) and China, countries like Singapore have been pioneers in AI governance. Singapore’s Personal Data Protection Commission (PDPC) released the Model AI Governance Framework as early as 2019 (with a second edition in 2020), which provides detailed guidelines and examples for organizations to implement responsible AIpdpc.gov.sg. It covers areas such as internal governance structures (calling for clear roles, risk assessment processes, and staff training on AI)pdpc.gov.sgpdpc.gov.sg, determining the appropriate level of human involvement in AI decisions, and operational management like bias mitigation and robustness checkspdpc.gov.sg. Singapore also set up AI Verify, an AI governance testing framework and toolkit, as a practical instrument: it allows companies to run standard tests on their AI models for metrics related to key principles (e.g. fairness, explainability, robustness) and generate reports to demonstrate their AI’s trustworthinesspdpc.gov.sgpdpc.gov.sg. This was piloted with industry partners globally (like IBM, Microsoft, Google, financial institutions) and in 2023 Singapore even open-sourced this toolkit via the AI Verify Foundationpdpc.gov.sgpdpc.gov.sg. 
These initiatives position Singapore as a “neutral ground” for AI governance experimentation and reflect a philosophy of enabling AI innovation with guardrails built in up front rather than added after the fact. China’s approach stands in contrast in some ways: the Chinese government has rolled out a series of regulations targeting specific AI issues – for instance, the Regulations on Algorithmic Recommendation Services (effective March 2022) which require online platforms to disclose the use of personalization algorithms and prohibit algorithmic activities that endanger national security or social order. In 2023, China’s Cyberspace Administration issued new rules for generative AI services, requiring providers to conduct security assessments, align content with core socialist values, prevent false information, and if necessary, register their algorithms with authorities. These rules put more direct responsibility on companies to control AI outputs. They tie into China’s overarching policy, the New Generation AI Development Plan (2017), which explicitly mentions cultivating an AI governance framework with ethical norms, but within a model where the state plays a strong supervising roletranscend.io. Chinese companies thus face some of the most specific AI mandates to date, although enforcement is evolving.

On the international stage, there is growing collaboration to set global AI governance standards. The OECD’s AI Principles (2019), which stress values like transparency, fairness, accountability and were the first intergovernmental AI ethics agreement, have been endorsed by 50+ countries (including the US, EU, Japan, etc.)transcend.io. Building on that, the OECD launched an AI Policy Observatory and in 2023 created a framework to monitor the implementation of the G7 Hiroshima AI Process code of conducttranscend.io. The United Nations has also become active: UNESCO passed a Recommendation on the Ethics of AI (2021) urging member states to implement ethical impact assessments and oversight bodies for AI. In 2023 the UN Secretary-General proposed establishing a global AI regulatory body (analogous to the International Atomic Energy Agency) to oversee very advanced AI. While such ideas are nascent, they indicate momentum towards some form of global governance mechanism for AI in the future.

In summary, global trends in AI governance show a movement from voluntary principles to more concrete regulations, especially for higher-risk AI. The EU’s AI Act stands out as a stringent, comprehensive law setting a possible template. The US is taking a mixture of standards-based and targeted regulatory steps, with a strong role for industry self-governance in the meantime. Many countries in Asia are proactively issuing guidelines to encourage “Responsible AI” practices (often aligning with OECD/G7 principles) and a few, like China, are actively regulating certain AI behaviors to address immediate societal concerns. This dynamic environment means companies operating internationally must keep abreast of multiple evolving requirements – from complying with explicit rules (like the EU’s forthcoming compliance for high-risk AI systems) to following best-practice frameworks (like NIST or Singapore’s) to meet stakeholder expectations where laws lag. The convergence on key themes – risk-based control, transparency, human oversight, accountability – across these jurisdictions is notable, suggesting a gradually solidifying consensus on what responsible AI entails, even if the enforcement mechanisms differ.

3. Corporate Case Studies: AI Governance in Practice

To understand how AI governance frameworks are implemented, it is instructive to look at several leading companies that have been at the forefront of establishing and operationalizing AI governance. These case studies span Japanese industrial giants and global tech companies, illustrating both common best practices and variations in approach.

Toyota – as a manufacturing and mobility company – has been incorporating advanced AI in areas like autonomous driving, manufacturing automation, and consumer services. Recognizing the risks and responsibilities that come with AI (especially after the emergence of powerful generative AI), Toyota Motor North America (TMNA) took a notable step by setting up a “Responsible AI Organization” in 2023cdomagazine.tech. This is essentially a multi-disciplinary AI governance committee or review board. It convenes senior leaders and experts from various domains: enterprise AI and data science teams, compliance and legal, privacy, and cybersecurity departmentscdomagazine.tech. By bringing together these diverse perspectives, Toyota ensures that any AI initiative is examined holistically – not just for technical feasibility, but also for legal/ethical implications and security. The Responsible AI Organization’s mandate covers three key prioritiescdomagazine.tech: (1) Education – It spearheads internal education about AI, ensuring that teams across the company understand what technologies like generative AI can and cannot do. This awareness-building is critical so that end-users and managers have realistic expectations and can identify misuse (Toyota explicitly wanted to demystify GenAI for employees)cdomagazine.tech. (2) Ethical Oversight – The board reviews plans and decisions around AI implementation to ensure they stay “above the board” ethicallycdomagazine.tech. In practice, that means scrutinizing AI use cases for alignment with Toyota’s values and ethical guidelines (for instance, making sure an AI feature prioritizes safety and does not unfairly disadvantage any customer group). If a proposed AI application raises red flags (say, potential bias in an AI-driven customer service tool), the board can recommend adjustments or even veto it until concerns are resolved. 
(3) Customer Trust and Benefit – Toyota frames its AI goal as making sure “the data works for our customers and that AI is used in the most responsible and respectful way possible.”cdomagazine.tech. Concretely, the governance board examines whether each AI or data initiative directly benefits customers and respects their rights (including compliance with regulations like privacy and obtaining explicit customer consent where needed)cdomagazine.tech. This focus on customer-centric AI aligns with Toyota’s brand promise of quality and safety. According to Toyota’s Chief Data Officer, the formation of this Responsible AI Organization was “paramount in bringing it all together” – integrating AI efforts with Toyota’s longstanding culture of quality, safety, and continuous improvementcdomagazine.tech. In effect, Toyota leveraged its existing strengths in governance (such as its rigorous safety review processes in manufacturing) and extended them to AI, creating a formal structure to vet AI as carefully as any physical vehicle component.

Sony provides another illustrative example, especially in how a traditional consumer electronics and entertainment conglomerate can implement AI ethics across diverse business units (from gaming AI to image recognition to financial services AI). Sony recognized early the need for AI ethics; in 2018 Sony issued the Sony Group AI Ethics Guidelines, a public document stating principles for “how all Sony officers and employees should utilize AI and conduct AI-related R&D” in harmony with societysony.com. These guidelines include commitments to Fairness, Transparency, Privacy Protection, Accountability, and an interesting principle of “the Evolution of AI and Ongoing Education,” which acknowledges AI tech will change and Sony must continually educate its people and update its policiessony.com. To enforce these principles, Sony in 2019 established an internal Sony Group AI Ethics Committeesony.com. This committee comprises executives from different backgrounds (likely including technical R&D leads, legal, perhaps business unit heads) and it “checks and reviews Sony’s use of AI and related R&D from societal and ethical perspectives”sony.com. In practice, when a Sony division is developing a new AI-powered product or service (say an AI feature in a camera or an entertainment recommendation algorithm), the Ethics Committee reviews it for compliance with the Sony AI Ethics Guidelines. They ensure, for example, that the product has been evaluated for fairness (no undue bias in an AI music recommendation system), transparency (users are informed of AI use in a robot toy), and security. The Committee has authority to demand modifications or impose conditions so that activities stay within ethical boundssony.com. Sony also created an operational arm for these efforts: in 2021 it set up an AI Ethics Office within Sony Group Corp, which was later renamed the AI Governance Officesony.com. 
This office develops internal procedures, training, and tools for business units to implement the AI Ethics Guidelines. For instance, one initiative from this office was to integrate ethics checks into the product commercialization process – Sony reports that “from early in the commercialization process, elements such as fairness and transparency are evaluated based on pre-defined requirements to confirm appropriate measures are implemented” for products with AIsony.com. This means Sony has checklists or testing protocols that engineers must go through as they design AI features, ensuring issues are caught pre-launch. In response to new challenges like generative AI, Sony in 2023-2024 introduced additional internal guidelines governing employees’ use of generative AI tools (to prevent data leaks or unethical content generation)sony.comsony.com. And by 2025, Sony established a Global AI Governance Policy that sets a unified approach to comply with laws and internal rules across all its subsidiaries worldwidesony.com. Sony’s multi-layered governance structure – guidelines, Ethics Committee, Governance Office, and periodic policy updates – illustrates a comprehensive corporate governance model. It emphasizes executive oversight (the Ethics Committee reports to top management), cross-company integration of AI ethics (policies applied group-wide, not just in silos), and continual adaptation (e.g. updating policies for new tech).

Hitachi, a Japanese multinational known for infrastructure, finance, and IT services, has also proactively articulated AI governance practices. Hitachi views trustworthy AI as crucial to its “Social Innovation Business” strategy (delivering solutions in smart cities, healthcare, etc., that rely on AI). In February 2021, Hitachi published its Guiding Principles for the Ethical Use of AIhitachi.com. These principles (seven in total, similar to many AI ethics sets with values like privacy, security, fairness, transparency, etc.) serve to “reduce the risks posed by AI and utilize it while maintaining safety and security”hitachihyoron.com. They are meant to be followed in all AI-related R&D and deployments. Importantly, Hitachi coupled these principles with efforts to “establish AI governance mechanisms to manage risks from the perspective of AI ethics.”hitachi.com. This indicates that Hitachi didn’t stop at issuing principles; it worked on institutional processes to implement them. For example, Hitachi might have integrated ethical risk checkpoints in its project management and risk management meetings. Indeed, Hitachi’s Integrated Report 2024 mentions that as part of strengthening overall risk management, the company incorporated AI governance in its group-wide risk assessments and set common rules under a Group Governance Policyhitachi.comhitachi.com. Hitachi also stays responsive to new AI trends: it developed internal guidelines for generative AI use in August 2023, when tools like ChatGPT started proliferating in business, to ensure employees use such tools securely and ethicallyhitachi.com. Then in March 2024, it expanded those guidelines to cover providing generative AI services externally (likely addressing issues like content safety, IP, etc., in AI features for customers)hitachi.com. This continuous evolution shows Hitachi’s commitment to keeping its AI governance current. 
Organizationally, Hitachi’s approach appears to embed AI governance into existing structures (the Chief Risk Management Officer and committees) rather than a standalone AI ethics board – showing that for some companies, integrating AI governance into enterprise risk management and compliance systems is an effective strategy.

Turning to U.S. tech companies, Google and Microsoft have been very prominent in the AI governance discussion, both because they are front-runners in AI innovation and because they have faced public scrutiny around AI ethics (e.g. concerns about bias in Google’s services or Microsoft’s Tay chatbot incident).

At Google, a major inflection point was the 2018 fallout from an AI defense contract (Project Maven) and employee protests, which led Google to draft its AI Principles. These seven principles, announced by CEO Sundar Pichai in June 2018, include pledges such as: AI should be socially beneficial, avoid creating or reinforcing unfair bias, be built and tested for safety, be accountable to people, incorporate privacy design principles, uphold high standards of scientific excellence, and be made available for uses that accord with these principles. Google also listed applications it will not pursue (e.g. weapons, unlawful surveillance)transcend.io. These principles serve as Google’s moral compass for AI development. The challenge for Google has been implementing them across a huge organization. Google established internal review processes like the Responsible Innovation team and review committees for sensitive projects. For instance, certain AI research at Google now goes through an ethical review before publication (a policy that generated controversy with the dismissal of some AI ethics researchers in 2020). Google reportedly has a cross-functional AI Ethics review board internally (not public like an external board, but an internal governance structure) that evaluates high-risk AI product plans for alignment with the AI Principles. One known mechanism is the “AI Principles Review” process that product teams must engage in for sensitive uses (e.g. cloud AI services that might be used in surveillance). Additionally, Google invested in technical tools for governance, like developing AI model cards (transparency documentation) and fairness toolkits (the What-If Tool, etc.) to help identify biases. In 2022, Google centralized some of these efforts under a Responsible AI and Human-Centered Technology unit, and it publishes an annual AI Impact Report detailing progress on responsible AIpublicpolicy.google. 
Despite some setbacks (Google attempted to form an Advanced Technology External Advisory Council in 2019 but dissolved it amid criticism of its member selection), Google continues to adjust its governance. For example, with the launch of its Bard generative AI and other products, Google convened ethics and legal teams to create guardrails (Bard has content filters, and Google has an internal red-teaming group to test AI models for misuse). Thus, Google’s case highlights a principle-driven approach supplemented by internal oversight committees and a variety of process interventions to uphold those principles.

Microsoft has woven AI governance deeply into its corporate governance structure, leveraging lessons from early AI issues (like the 2016 Tay chatbot which learned to produce offensive tweets – a failure that spurred Microsoft to action on AI ethics). Microsoft adopted a set of Responsible AI Principles around 2017, focusing on fairness, reliability & safety, privacy & security, inclusiveness, transparency, and accountabilitymicrosoft.commicrosoft.com. To ensure these principles are more than slogans, Microsoft set up multiple layers: an AI governance structure consisting of the AETHER Committee and the Office of Responsible AI (ORA), among others. The AETHER Committee (standing for AI and Ethics in Engineering and Research) is a high-level internal committee with senior researchers and department heads that advises on hard ethical challenges and reviews sensitive use cases. They identify issues (for instance, the AETHER Committee reportedly influenced Microsoft’s decision to restrict certain facial recognition technology sales due to bias concerns). The Office of Responsible AI, on the other hand, is a corporate function that develops rules, training, and tools to enact the AI principles across the company. In 2022, Microsoft publicly released its internal Responsible AI Standard (v2)it1.comblogs.microsoft.com, a detailed document that translates principles into concrete requirements for product teams. For example, it requires teams to perform an Impact Assessment early in development of an AI system, to categorize the system’s risk (e.g. is it a consequential decision system affecting people’s livelihood?), and then follow appropriate controls. 
The Standard also mandates things like transparency documentation (every AI system must have a transparency note for customers or users explaining its capabilities and limitationsverityai.co), ensuring human oversight for certain AI decisions, and even foreseeing misuse (teams must think about how their AI could be misused and plan mitigations)unece.org. Microsoft backs this up with internal training – e.g., an online Responsible AI curriculum for engineers – and a network of Responsible AI Champs in different product teams to act as liaisons. Additionally, Microsoft has developed and open-sourced several responsible AI tools (such as Fairlearn for bias mitigation, InterpretML for explainability, and the Azure Machine Learning Responsible AI dashboard)transcend.io. These help teams test their models for fairness, interpret model behavior, etc., enforcing governance in the software development workflow. Moreover, Microsoft actively involves top leadership: a weekly Responsible AI Council with members of the Senior Leadership Team was in place to track major issues. (It’s worth noting Microsoft did face a reorganization in 2023 where it laid off some ethics and society team members to consolidate resources, but it stated the commitment to Responsible AI governance remains, anchored by the ORA and principle-based rules). Microsoft’s case exemplifies a very formalized AI governance program embedded at all levels of the company – policy (principles/standard), people (committees, champions, ORA staff), and technology (tools, checklists). It treats AI governance similarly to how companies treat security or privacy governance – with defined standards and oversight offices.

IBM provides a different perspective as both a developer of AI (e.g. IBM Watson) and a seller of AI solutions to enterprises. IBM’s leadership in AI ethics has been notable. It co-chaired the drafting of the OECD AI Principles and has been vocal about “ethical AI is good for business.” IBM in 2018 published its Principles for Trust and Transparency, and in 2019 it formalized an internal AI Ethics Boardibm.com. This board includes IBM’s global chief privacy officer, AI researchers, legal experts, and business leaders. The AI Ethics Board reviews IBM’s product pipeline and research to ensure alignment with IBM’s principles (which cover accountability, explainability, fairness, and values alignment). For example, when IBM was developing an AI product for HR, the Ethics Board would evaluate it for bias or discriminatory impact. IBM’s Board also sets policies – one outcome was IBM deciding to stop offering general-purpose facial recognition software in 2020 due to bias and privacy concerns, a move explicitly tied to its ethical stance. IBM has also integrated ethics into its product development via IBM Watson’s OpenScale tools that track AI decisions and bias in real time, and via releasing open-source toolkits (AI Fairness 360, AI Explainability 360, Adversarial Robustness 360) to help the industry collectively tackle AI governance challengestranscend.io. IBM’s emphasis is often on trustworthy AI – ensuring AI is explainable and provable. The IBM AI Ethics Board’s existence sends a strong signal of top-down accountability: it reports to senior leadership and requires each division to consider ethics. IBM also requires ethics training for AI developers and has an ethics evaluation process in its AI development methodology.

These case studies, despite spanning different industries and cultures, reveal common threads in corporate AI governance best practices:

- Senior Leadership Involvement: All these companies involve top executives or dedicated committees (often reporting to the C-suite) to oversee AI ethics, signaling that AI governance is a board- and CEO-level priorityibm.comsony.com. For example, Sony’s committee of executives and Microsoft’s Senior Leadership Team council both keep leadership accountable.

- Formal Principles or Guidelines: Each has documented AI principles or policies that set the expected norms (Google’s AI Principles, Sony/Hitachi guidelines, Microsoft/IBM principles). These create a shared language in the organization about what responsible AI means.

- Cross-Functional Teams: AI governance is never left to just the engineers or just legal; it’s inherently interdisciplinary. Toyota’s and Google’s inclusion of diverse roles (legal, compliance, technical, etc.) in reviews is typicalcdomagazine.techtranscend.io. This ensures well-rounded oversight.

- Processes for Review and Approval: There are workflows in place – whether it’s an AI Ethics Board review before product launch, or an internal requirement for an AI risk assessment. These processes institutionalize governance, rather than relying on ad-hoc consideration.

- Tools and Technical Measures: The companies invest in technical tools to audit and improve AI (bias testing frameworks, explainability tools, model documentation templates). This is crucial because AI governance at scale needs automation support.

- Continuous Education and Adaptation: They all emphasize training employees (Toyota educating teams on GenAI, Microsoft training on responsible AI, Sony’s ongoing-education principle) and updating policies to handle new AI developments (Sony adding generative AI rules, Hitachi updating for external services, Google adjusting for new model types)cdomagazine.techhitachi.com. AI governance isn’t static – it’s an evolving program.

In sectors like finance, healthcare, or automotive, we see domain-specific implementations too (e.g. banks creating model risk management frameworks for AI, hospitals having algorithm committees), but broadly they mirror these practices. For smaller companies and startups, the governance might be less formal – perhaps just a set of guiding principles and code reviews – but even they are increasingly adopting similar elements (many startups, for example, publish AI ethics charters or form advisory boards).

It’s worth noting that corporate AI governance is sometimes driven by external partnerships: for instance, several of these companies are members of the Partnership on AI, an industry consortium that develops best practices on AI ethics; many also contribute to standards bodies (ISO/IEC JTC1 SC42 on AI) or policy advocacy (Microsoft and Google have called for AI regulations and published white papers on governance). This shows that leading companies not only govern their own AI use, but try to shape the broader governance ecosystem, which in turn influences how they refine their internal frameworks.

4. Methodologies and Steps for Building AI Governance

Implementing AI governance in an organization can seem daunting, but various frameworks and experts have converged on a set of practical steps and methodologies. The process is analogous to setting up any strong governance or compliance program (like for data privacy or IT security), but tailored to the unique aspects of AI. Below, we outline a strategic approach to building AI governance, from initial planning through ongoing management, with notes on scaling for different organization sizes.

Step 1: Define Governance Scope and Objectives – Start by establishing what AI governance means for your organization. This involves identifying the AI systems and use cases currently in use or planned (e.g. AI in analytics, customer service chatbots, machine learning models in products) and understanding the potential risks and impact of each. Many organizations conduct an enterprise-wide AI inventory or audit: essentially, ask all departments what AI or automated decision systems they use, including “shadow AI” (tools employees might use without formal approval)iapp.org. This inventory lays the foundation for governance because you can’t control what you don’t know exists. Next, clarify the objectives of your AI governance program. Common objectives include ensuring compliance with laws, preventing harm or bias, aligning AI with corporate values, and achieving consistency in AI development practices. At this stage, it’s wise to articulate high-level AI Principles or Policy Statements that reflect these objectives (or adopt existing ones from external frameworks). If your organization already has ethical guidelines or a code of conduct, consider how AI principles integrate – for example, adding AI clauses about non-discrimination and transparency. The tone from the top is crucial: management should communicate that the purpose of AI governance is not to hinder innovation, but to “align AI technology with business goals, customer expectations, and legal standards”fisherphillips.com, ultimately enabling sustainable AI adoption.
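The inventory described in Step 1 can be kept as a simple structured register. The following is a minimal sketch, assuming a three-level risk tier and an `approved` flag to mark shadow AI; the class names, field names, and sample systems are hypothetical and would need to be adapted to an organization's own taxonomy.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Hypothetical three-level scale; real programs may use other tiers.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AISystem:
    name: str
    department: str
    purpose: str
    risk_tier: RiskTier
    approved: bool  # False marks "shadow AI" used without formal approval


def shadow_ai(inventory):
    """Return systems that are in use but never went through formal approval."""
    return [s for s in inventory if not s.approved]


def review_queue(inventory):
    """Order systems for review: highest risk first, unapproved before approved."""
    return sorted(inventory, key=lambda s: (-s.risk_tier.value, s.approved))


# Illustrative entries only; not drawn from any real organization.
inventory = [
    AISystem("support-chatbot", "Customer Service", "answer customer questions", RiskTier.MEDIUM, True),
    AISystem("resume-screener", "HR", "rank job applicants", RiskTier.HIGH, True),
    AISystem("public-genai-assistant", "Marketing", "draft campaign copy", RiskTier.MEDIUM, False),
]

for system in review_queue(inventory):
    status = "approved" if system.approved else "SHADOW AI"
    print(f"{system.risk_tier.name:<6} {system.name} ({system.department}) [{status}]")
```

Even a spreadsheet with these columns serves the same purpose; the point is that every system has a named department, a stated purpose, and a risk tier before governance decisions are made about it.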

Step 2: Establish an AI Governance Structure – Determine who will be responsible for AI governance. Many companies find it effective to create a cross-functional AI governance committee or working groupfisherphillips.com. This group should include representatives from relevant areas: IT/AI development teams, data science or analytics, compliance/legal, risk management, business unit leaders that use AI, and possibly HR or communications if AI affects employees or customer messaging. The committee’s role is to oversee the governance rollout, make policy decisions (e.g. approve the AI principles, decide on tools to use), and serve as an escalation point for AI-related issues. For smaller organizations, a full committee might not be feasible – instead, they might designate an individual (like a Chief Data Officer or an Ethics Officer if one exists, or the head of IT/R&D) as the AI Governance Lead, who can then consult with an informal team as needed. The key is to ensure both technical expertise and ethical/legal perspective are involved in oversighttranscend.io. Define clear roles and responsibilities: for instance, who reviews an AI system for ethical risks before deployment? Who is accountable if an AI system causes an incident? Some companies assign specific roles like “AI Product Owner” (responsible for an AI solution’s compliance and performance), “Data Steward” (ensuring data governance for AI training data), or “Model Validator/Auditor” (independent reviewer of models)fisherphillips.com. Embedding accountability is vital – one principle of governance is that humans remain accountable for AI outcomesfisherphillips.commicrosoft.com, so there must be a chain of responsibility from the AI system back to a person or team. 
Document this structure in an AI governance charter that spells out the committee’s mandate or the leader’s authority, and how it integrates with existing governance (for example, the AI committee may report into the overall Risk Management Committee or to the CTO).
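The chain of responsibility in Step 2 can be checked mechanically once roles are recorded per system. The sketch below assumes the three example roles named above and treats "independent reviewer" as meaning the validator must differ from the product owner; the role keys, function names, and people are hypothetical.

```python
# Role keys mirror the example roles in the text; the key names are assumptions.
REQUIRED_ROLES = ("ai_product_owner", "data_steward", "model_validator")


def accountability_gaps(assignments):
    """Map each AI system to the required roles that have no named person."""
    gaps = {}
    for system, roles in assignments.items():
        missing = [role for role in REQUIRED_ROLES if not roles.get(role)]
        if missing:
            gaps[system] = missing
    return gaps


def independence_violations(assignments):
    """List systems whose validator is the same person as the product owner."""
    violations = []
    for system, roles in assignments.items():
        validator = roles.get("model_validator")
        if validator and validator == roles.get("ai_product_owner"):
            violations.append(system)
    return violations


# Illustrative assignments with invented names.
assignments = {
    "resume-screener": {
        "ai_product_owner": "A. Tanaka",
        "data_steward": "B. Lee",
        "model_validator": "A. Tanaka",  # same person as the owner
    },
    "support-chatbot": {
        "ai_product_owner": "C. Novak",
        "data_steward": "",  # role exists but nobody is named
    },
}

print(accountability_gaps(assignments))
print(independence_violations(assignments))
```

A check like this is only as good as the register behind it, so the governance charter should state who keeps the assignments current.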

Step 3: Develop AI Policy Framework and Guidelines – With structure in place, the organization should create the detailed policies, standards, and procedures that will govern AI activities. Many elements can be modeled after existing governance domains:

- AI Ethics/Principles Document: A formal policy that outlines the organization’s AI principles (transparency, fairness, etc.) and any sector-specific ethical considerations. It can also reference external codes (like saying the company adheres to OECD or national principles). This sets the “north star” for AI use.

- Acceptable Use Guidelines: If the company allows employees to use AI tools (like generative AI assistants), guidelines should specify how to do so responsibly – e.g. don’t input confidential data into public AI services, verify AI-generated content before using it, etc. Sony’s 2023 generative AI guidelines or Hitachi’s internal rules are examples of such policy to avoid misusesony.comhitachi.com.

- AI Development Standards: Define requirements that every AI project must fulfill. For instance, Microsoft’s Responsible AI Standard requires an impact assessment and certain documentation for every AI systemcdn-dynmedia-1.microsoft.comit1.com. Your standards might include: perform bias testing on models serving more than X users, ensure an explanation method is available for decision-making AI, perform security testing on AI APIs, maintain human override for critical decisions, etc. These become checklist items for teams.

- Data Governance and Privacy: Reinforce data management practices specifically for AI – ensuring datasets used for training are legally collected, with minimal bias, and stored securely. Outline rules like requiring anonymization of personal data before using it in AI model training (to comply with privacy laws), and engaging the privacy office or data protection officer in AI projects earlyibanet.org. A strong data governance plan supports AI governanceiapp.org, since many AI issues trace back to data quality.

- Procurement and Third-Party AI: If using third-party AI solutions or APIs, policies should require due diligence on those (checking if the vendor has ethical standards, what data their model was trained on, any bias or security evaluations).

- Monitoring and Auditing Procedures: Set expectations for ongoing monitoring of AI systems (error rates, drift in bias metrics, etc.) and periodic audits. Also define incident response steps for an AI failure (e.g. if an AI system produces a serious error or policy violation, who must be notified and how to remediate it).

All these policies should be compiled in an AI governance manual or handbook accessible to employees. Simultaneously, update related policies (IT governance, model risk management if in finance, etc.) to reference AI considerations so nothing falls through the cracks. Crucially, keep the policies practical – the IAPP suggests that for SMBs, the initial policies should “set out responsibilities, roles and specific guardrails” in a simple, accessible wayiapp.org, rather than overly complex rules that might overwhelm a small team.
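As an illustration of the bias-metric monitoring mentioned in the policy list above, the following is a minimal sketch of one possible check: it compares favourable-outcome rates across groups and flags the system for review when the lowest rate falls too far below the highest. The function names, the record format, and the 0.8 threshold are assumptions made for this example, not values prescribed by any framework cited here; the actual metric and threshold should come from the organization's own policy.

```python
from collections import defaultdict

# Hypothetical alert threshold; the right value is a policy decision.
ALERT_THRESHOLD = 0.8


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, favourable) pairs, where
    `favourable` is True when the AI system produced a positive outcome.
    """
    totals = defaultdict(int)
    favourable_counts = defaultdict(int)
    for group, favourable in records:
        totals[group] += 1
        if favourable:
            favourable_counts[group] += 1
    return {g: favourable_counts[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


def needs_review(records, threshold=ALERT_THRESHOLD):
    """True when the disparity ratio falls below the policy threshold."""
    return disparity_ratio(selection_rates(records)) < threshold
```

A monitoring job could call `needs_review` on each period's decision log and route any flagged system to whoever the incident response procedure names.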

Step 4: Implement Training and Culture Programs – People are at the heart of governance. Even the best policies mean little if employees are not aware or capable of following them. So, develop a training program on AI governance. This can be tiered: a general awareness training for all staff (covering basic AI concepts, the company’s AI principles, do’s and don’ts of AI use) and more detailed training for technical teams and project managers who work directly with AI. For example, ensure that developers know how to use bias detection tools or that product managers know how to fill out an AI risk assessment form. Include real scenarios – e.g., walk through a case of an AI model that inadvertently discriminated and how governance practices catch and fix it. Regular training (at least annually) keeps knowledge freshfisherphillips.comfisherphillips.com. Also, nurture an ethical AI culture: encourage employees to speak up if they notice an AI behaving oddly or decisions that seem unethical (akin to a “speak-up” culture in compliance). Some companies incorporate discussions of AI ethics into their innovation process or hold workshops. An idea is to include AI governance as part of onboarding new engineers or data scientists, so from day one they consider ethics and compliance as part of their job, not an external imposition. Leadership should reinforce this culture by example – e.g. executives mentioning responsible AI in communications, and rewarding teams who find and mitigate an AI risk.

Step 5: Integrate Governance into Project Lifecycle – One of the most important methodological shifts is to embed AI governance “by design” into the AI system lifecycle, rather than checking only at the end. This is similar to the concept of “privacy by design” or “security by design”. Concretely, at project inception or ideation, teams should include an AI risk/benefit analysis: Why use AI for this task? What could go wrong? At the design and development phases, apply tools and best practices: e.g., use diverse training data, document data lineage, apply fairness toolkits to the model during testingfisherphillips.comfisherphillips.com. During validation, involve the AI governance committee or an independent reviewer not on the core team to audit for adherence to principles. Tie checkpoints/milestones to governance, such as a go/no-go approval gate where a checklist of governance items must be signed off (e.g., “We have tested for bias – results acceptable; We have an explanation method – documented; Legal has reviewed for compliance – ok”). Some organizations use AI model cards or fact sheets: documents accompanying a model that list its intended use, performance metrics, fairness metrics, limitations, and ethical considerationspublicpolicy.google. These are useful for both internal review and external transparency. It’s also advisable to involve end-users or stakeholders in the testing phase to get feedback on whether the AI is behaving fairly and usefully. At deployment, ensure that any required user communications are in place (such as disclaimers like “this chatbot is AI-powered” or obtaining user consent where needed). Post-deployment, set up a schedule for monitoring (e.g., evaluate outcome disparities every quarter, retrain models with updated data annually) and designate who will do this monitoring.
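The go/no-go approval gate described in Step 5 could be tracked with something as simple as the sketch below. It is illustrative only: the class names, fields, and the example checklist items are assumptions for this example, and a real gate would normally live in the organization's existing project or ticketing tooling.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class GateItem:
    """One governance checklist item that needs a sign-off."""
    name: str
    signed_off_by: Optional[str] = None  # None until a reviewer signs off
    evidence: str = ""                   # pointer to the test report, memo, etc.


@dataclass
class ApprovalGate:
    """A go/no-go gate: every item must be signed off before release."""
    project: str
    items: List[GateItem] = field(default_factory=list)

    def sign_off(self, name: str, reviewer: str, evidence: str) -> None:
        """Record who approved an item and where the evidence is kept."""
        for item in self.items:
            if item.name == name:
                item.signed_off_by = reviewer
                item.evidence = evidence
                return
        raise KeyError(f"unknown gate item: {name}")

    def outstanding(self) -> List[str]:
        """Names of the items that still lack a sign-off."""
        return [i.name for i in self.items if i.signed_off_by is None]

    def decision(self) -> str:
        """'go' only when nothing is outstanding, otherwise 'no-go'."""
        return "go" if not self.outstanding() else "no-go"
```

Recording the reviewer and an evidence pointer for each item also gives auditors the chain of responsibility back to a named person that the governance structure calls for.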

Step 6: Monitor, Audit, and Continually Improve – AI governance is an ongoing process. Organizations should treat AI systems as living systems that require continuous oversight. Establish metrics and KPIs for the governance program itself – for example, percentage of AI projects that completed an ethics review, number of AI incidents or near-misses reported, reduction in model bias over time, etc. Many companies conduct regular audits or assessments of their AI systems. These can be done by an internal audit team with relevant expertise or by external auditors/consultants for an independent check. In regulated industries, these audits may eventually be expected by regulators. An audit might review whether the AI systems still conform to initial requirements and whether any drift or new risks have emerged. It also evaluates the effectiveness of governance processes – e.g., is the bias testing procedure actually catching issues? Findings should feed back into refining the governance documents or training. Additionally, keep an eye on external developments: new regulations (such as EU AI Act guidelines as they develop, or new laws in your country), new industry best practices (e.g., standards released by ISO or NIST updates), and advances in AI ethics research (perhaps new techniques to explain black-box models). The governance committee or leader should have a process to “stay informed on AI governance trends”fisherphillips.com – this might mean subscribing to industry newsletters, participating in AI governance forums, or consulting legal counsel about upcoming laws. When changes happen, update internal policies accordingly. For example, if a law now requires that users can opt out of AI decisions, ensure your processes incorporate that user right.
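The governance-program KPIs mentioned in Step 6 can be computed from a simple project register. The sketch below assumes a hypothetical record per AI project with two fields (whether an ethics review was completed, and how many incidents or near-misses were reported); both the record layout and the KPI names are illustrative, not taken from any cited framework.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ProjectRecord:
    """Hypothetical register entry for one AI project."""
    name: str
    ethics_review_done: bool
    incidents_reported: int  # incidents and near-misses in the period


def governance_kpis(projects: List[ProjectRecord]) -> Dict[str, float]:
    """Summarize review coverage and incident counts across projects."""
    total = len(projects)
    reviewed = sum(1 for p in projects if p.ethics_review_done)
    return {
        "projects": total,
        # Percentage of projects that completed an ethics review.
        "ethics_review_pct": round(100 * reviewed / total, 1) if total else 0.0,
        # Total incidents and near-misses reported across all projects.
        "incidents_reported": sum(p.incidents_reported for p in projects),
    }
```

Tracking these figures period over period, rather than as one-off snapshots, is what lets the committee judge whether the program itself is improving.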

Step 7: Adapt for Scale (SMEs and Large Enterprises) – The methodologies above are general, but how they scale depends on organizational size and resources. Large enterprises may have the capacity for multiple layers (like Microsoft’s multi-tier governance with an Office of Responsible AI, an oversight committee, and “champions” in each team). Large companies can also consider external advisory boards for AI ethics, bringing in academic or civil society experts periodically to review, advise, and provide an outside perspective. In contrast, small and medium-sized enterprises (SMEs) should not be deterred by resource constraints – AI governance can be right-sized. As experts note, SMBs can manage AI responsibly “without creating new departments or hiring ethicists and lawyers” by leveraging existing staff and focusing on the basicsiapp.org. For instance, an SME’s CTO or CIO might also serve as the AI governance officer. They can use off-the-shelf tools and frameworks (like adopting the open-source AI Verify toolkit for testing models, rather than developing their own tests)pdpc.gov.sg. They might enforce a simpler checklist (covering the critical points: fairness, privacy, security, accountability) for all AI tools they use. The key for smaller organizations is to start the governance conversation early – even informal practices are better than nothing – and then formalize more as they grow. Many governance frameworks encourage a maturity model approach: you can start at an “initial” level with ad-hoc measures and work towards a “managed” or “optimized” level as your AI use deepens.

Additionally, templates and communities can help. SMEs can reference guidelines like Singapore’s Model Framework or industry association templates to draft their policies. They can join industry groups or coalitions for responsible AI to share knowledge. The IAPP suggests that SMB leaders at least “ask the right questions, put foundational guardrails in place, and grow AI capacity confidently” as a pragmatic starting pointiapp.orgiapp.org. For example, a foundational guardrail could be: never deploy an AI model that hasn’t been peer-reviewed by another engineer or tested on diverse data – a simple rule that can prevent obvious issues.

Methodologically, some frameworks break AI governance implementation into phases such as Plan → Implement → Validate → Evolve, which mirrors the steps described above. Plan (steps 1–3) covers setting up structures and policies. Implement (steps 4–5) covers training and embedding governance into the project lifecycle. Validate (step 6) covers monitoring and auditing. Evolve (the continual-improvement part of step 6, plus step 7) covers adapting and scaling with experience and external changes.

It’s worth highlighting a few example frameworks and how they align to these steps:

- The NIST AI RMF suggests starting with the Govern function (which aligns to steps 1–3: setting governance processes and organizational context), followed by Map, Measure, and Manage. These correspond closely to the risk work described above: identifying use cases and their context (Map), testing and validating (Measure), and applying mitigations and oversight continuously (Manage)hyperproof.io.

- Singapore’s Model AI Governance Framework provides concrete practices such as establishing “Internal governance structures and measures” (roles, SOPs, training)pdpc.gov.sg, “Determining level of human involvement” (decide how human oversight will work)pdpc.gov.sg, and “Operations management” (monitoring biases, having a risk-based approach to explainability and robustness)pdpc.gov.sg – all of which map onto the steps above. Essentially: get structure and people in place, figure out human/AI decision balance, and manage the operations with bias mitigation, etc.

- The Fisher Phillips 10-step guide (2024), targeted at businesses standing up AI governance, aligns well too: it covers understanding AI governance, forming a committee, documenting AI use cases, bias-checking mechanisms, accountability pathways, scenario planning (thinking through worst-cases), audits and training, documentation of decisions, staying informed, and partnering with expertsfisherphillips.comfisherphillips.comfisherphillips.comfisherphillips.comfisherphillips.com. We have woven most of these concepts into our generic steps. For example, their point on “Use real-world scenarios to create guardrails” is a useful exercise – as part of Step 3 or 5, one can run scenario analyses (like “what if our AI malfunctions in this way – how can we prevent or mitigate that?”)fisherphillips.comfisherphillips.com. This kind of red-teaming or pre-mortem analysis can strengthen policy and design.

In building AI governance, it’s also important to align it with the organization’s overall risk management and corporate governance strategies. Many companies are starting to include AI risks in their enterprise risk registers and having board-level discussions on AI. In fact, boards of directors are increasingly expected to oversee AI strategy and risk, akin to their duty with cybersecurity. Thus, part of the methodology is ensuring the board and top executives are educated on AI governance (perhaps via a board briefing or including AI governance in ESG reporting).

To summarize this section in actionable points, one can think of a checklist for building AI governance:

- ✅ Set AI Ethical Principles and secure leadership endorsement.

- ✅ Appoint responsible people/committee and define their mandate.

- ✅ Inventory AI use and conduct risk assessments.

- ✅ Draft and implement AI governance policies and procedures (covering design, deployment, monitoring).

- ✅ Embed these into project workflows and train all relevant staff.

- ✅ Monitor AI systems and compliance with policies; audit regularly.

- ✅ Review and update the governance program as technology and regulations evolve.

By following these steps, organizations create a governance framework that is robust yet flexible. Importantly, effective AI governance is not about saying “No” to AI – it’s about enabling responsible AI innovation. Organizations that invest in governance often find that it streamlines AI adoption because it builds trust and clarity. There are also “quantifiable benefits”: fewer failures, avoided legal fines, better AI performance once harmful biases are removed, and reputational advantages with customersiapp.org. This pragmatic, structured approach lets organizations scale AI solutions in a way that is ethical, compliant, and aligned with business values.

5. Future Outlook and Challenges

As AI capabilities accelerate and become more entwined with business and society, the field of AI governance is entering a dynamic new phase. We can anticipate significant developments in how governance frameworks evolve, as well as challenges that organizations and regulators will need to overcome. Here we analyze some key aspects of the future outlook: